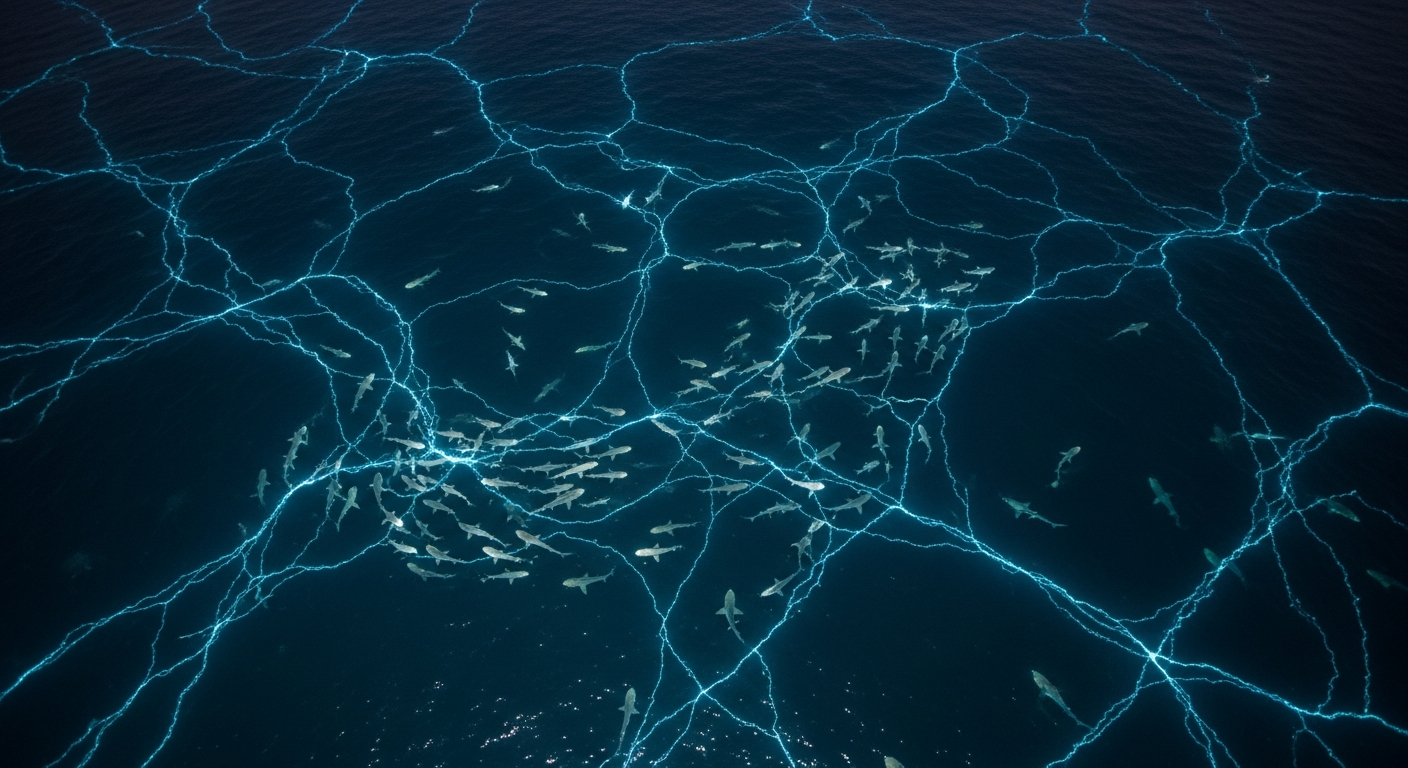

The 7 Pessimists Who Beat the Market: How MiroShark Is Fusing Social Simulation with Prediction Markets

A two-week-old open-source fork produced an estimate within 10 percentage points of Polymarket’s price – for $3 to $5 a run

Here is an experiment worth paying attention to. A researcher spun up 200 AI agents on a Mac mini, let them argue for 49 minutes across simulated Twitter and Reddit about the Strait of Hormuz shipping crisis, then checked what they thought. The majority consensus settled at 47.9% probability of normalization. The formal interviews pushed estimates above 60%. But the 7 most pessimistic agents – simulated Iranian and Chinese foreign ministers among them – averaged 22%. Polymarket’s actual price at the time: 31%.

The minority dissenters, not the crowd, got closest to the market. And the whole thing cost somewhere between $3 and $5.

That experiment ran on MiroShark, a project that barely existed three weeks ago. What it suggests about the future of AI simulation, prediction markets, and the feedback loops between them is worth unpacking.

From Beijing to the World in Two Forks

MiroShark did not emerge from nothing. It sits at the end of a fork chain that tells its own story about how open-source software actually evolves.

It started with MiroFish, built in 10 days by Guo Hangjiang, a 20-year-old undergraduate at Beijing University of Posts and Telecommunications. MiroFish hit #1 on GitHub’s global trending list, attracted 33,000+ stars, and secured $4.1 million in funding from Shanda Group founder Chen Tianqiao within 24 hours of a demo. The project uses CAMEL-AI’s OASIS simulation engine to spawn thousands of AI agents with distinct personalities that interact on simulated social media platforms.

But MiroFish had two problems for Western developers: it was entirely in Chinese, and it required cloud services (Zep Cloud, DashScope) that many users could not or did not want to use.

Enter MiroFish-Offline, created by a developer called nikmcfly. This fork translated over 1,000 UI strings to English, swapped Zep Cloud for Neo4j Community Edition, and replaced cloud LLM APIs with Ollama for fully local operation. It reached 1,710 stars – a fraction of MiroFish’s count, but it solved the access problem.

Then Aaron Mars of Tela VC took MiroFish-Offline and built MiroShark. He added parallel cross-platform simulation, Polymarket prediction market modeling, a bidirectional “Market-Media Bridge,” 10x Neo4j write performance through batched operations, and a Smart Model routing feature that keeps costs manageable. As of April 4, 2026: 421 stars, 68 forks, growing at roughly 28 stars per day.

Each fork in this chain solved a specific barrier identified by the community. Language. Cloud dependency. Market simulation. The pattern suggests that the most impactful open-source contributions are not always new frameworks but community bridge layers that make breakthrough projects accessible to wider audiences.

The Market-Media Bridge: Why It Matters

The technical feature that separates MiroShark from everything else in the open-source multi-agent landscape is what Mars calls the “Market-Media Bridge.” It is, as far as the 41 sources reviewed for this analysis can determine, the only open-source tool combining social media simulation with prediction market simulation in a single feedback loop.

The mechanism works in both directions. Bearish Reddit threads and negative Twitter sentiment flow into Polymarket trader prompts, influencing their position-sizing and directional bets. Market price movements – crashes, rallies – feed back into social media agent prompts, triggering panic posts, reassessments, or contrarian takes.

This creates a closed loop that mirrors real-world dynamics. A viral tweet can tank a prediction market. A market crash can trigger a social media storm. These dynamics are well-documented in practice but rarely modeled together in simulation.
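To make the two directions concrete, here is a minimal sketch of one round of such a loop. It is an illustration under stated assumptions, not MiroShark's code: `BridgeState`, the two context builders, the 0.2 sentiment cutoff, and the 5-point price-move threshold are all hypothetical, and the `traders` and `posters` callables stand in for the LLM-driven agents.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class BridgeState:
    """Shared state passed between the two simulated worlds (hypothetical)."""
    market_price: float            # current YES price, between 0 and 1
    social_sentiment: float = 0.0  # mean sentiment of recent posts, -1 to 1
    history: list = field(default_factory=list)


def trader_prompt_context(state: BridgeState) -> str:
    """Social -> market: summarize recent sentiment for trader agents."""
    if state.social_sentiment < -0.2:
        mood = "bearish"
    elif state.social_sentiment > 0.2:
        mood = "bullish"
    else:
        mood = "mixed"
    return f"Recent social posts are {mood} (score {state.social_sentiment:+.2f})."


def social_prompt_context(state: BridgeState, previous_price: float) -> str:
    """Market -> social: describe the latest price move for posting agents."""
    change = state.market_price - previous_price
    if change <= -0.05:
        move = "crashed"
    elif change >= 0.05:
        move = "rallied"
    else:
        move = "held steady"
    return f"The market {move}: YES is now {state.market_price:.0%}."


def run_round(
    state: BridgeState,
    traders: Callable[[str, float], float],  # (context, price) -> new price
    posters: Callable[[str], float],         # context -> new mean sentiment
) -> BridgeState:
    """One pass around the loop: sentiment moves price, price moves sentiment."""
    previous_price = state.market_price
    state.market_price = traders(trader_prompt_context(state), previous_price)
    state.social_sentiment = posters(social_prompt_context(state, previous_price))
    state.history.append((state.market_price, state.social_sentiment))
    return state
```

The point of the design is that neither side sees the other's raw output. Each receives only a short summary injected into its prompt, which is how a loop like this could stay cheap while still letting a crash on one side provoke a reaction on the other.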

The prompt engineering behind the loop is more thoughtful than you might expect from a two-week-old project. Twitter agents default to inaction 36% of the time, matching real-user lurking behavior (up from 0% in MiroFish, where every agent posted constantly). Reddit agents write in paragraph form and cite sources. Polymarket traders receive contrarian psychology nudges and position-sizing heuristics. The Polymarket AMM uses constant-product pricing – a well-understood DeFi primitive – with initial prices derived from LLM probability estimates rather than defaulting to 50/50.
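For readers unfamiliar with constant-product pricing, the sketch below shows a generic binary market maker seeded from a probability estimate instead of 50/50. It follows the standard fixed-product design (the product of the YES and NO reserves stays constant, fees ignored) and is not taken from MiroShark's source; the class name, the `liquidity` parameter, and the method names are assumptions made for illustration.

```python
class ConstantProductMarket:
    """Generic two-outcome constant-product market maker (illustrative only)."""

    def __init__(self, initial_yes_prob: float, liquidity: float = 1000.0):
        if not 0.0 < initial_yes_prob < 1.0:
            raise ValueError("initial_yes_prob must be strictly between 0 and 1")
        # Seed reserves so the opening YES price equals the estimate.
        # A scarcer YES reserve means a higher YES price.
        self.yes = liquidity * (1.0 - initial_yes_prob)
        self.no = liquidity * initial_yes_prob
        self.k = self.yes * self.no  # invariant preserved by every trade

    def price_yes(self) -> float:
        """Marginal YES price implied by the current reserves."""
        return self.no / (self.yes + self.no)

    def buy_yes(self, collateral: float) -> float:
        """Spend collateral on YES shares; returns the number of shares bought."""
        if collateral <= 0:
            raise ValueError("collateral must be positive")
        # Collateral mints equal YES and NO shares into the pool, then YES
        # shares are paid out until the product returns to k.
        new_no = self.no + collateral
        new_yes = self.k / new_no
        shares_out = self.yes + collateral - new_yes
        self.yes, self.no = new_yes, new_no
        return shares_out


# Usage: open at a hypothetical LLM estimate of 31% and place one YES bet.
market = ConstantProductMarket(initial_yes_prob=0.31)
shares = market.buy_yes(50.0)
```

Seeding from an estimate matters because a 50/50 opening would force the first traders to spend their capital simply moving the price to a plausible level, which would distort early rounds of the simulation.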

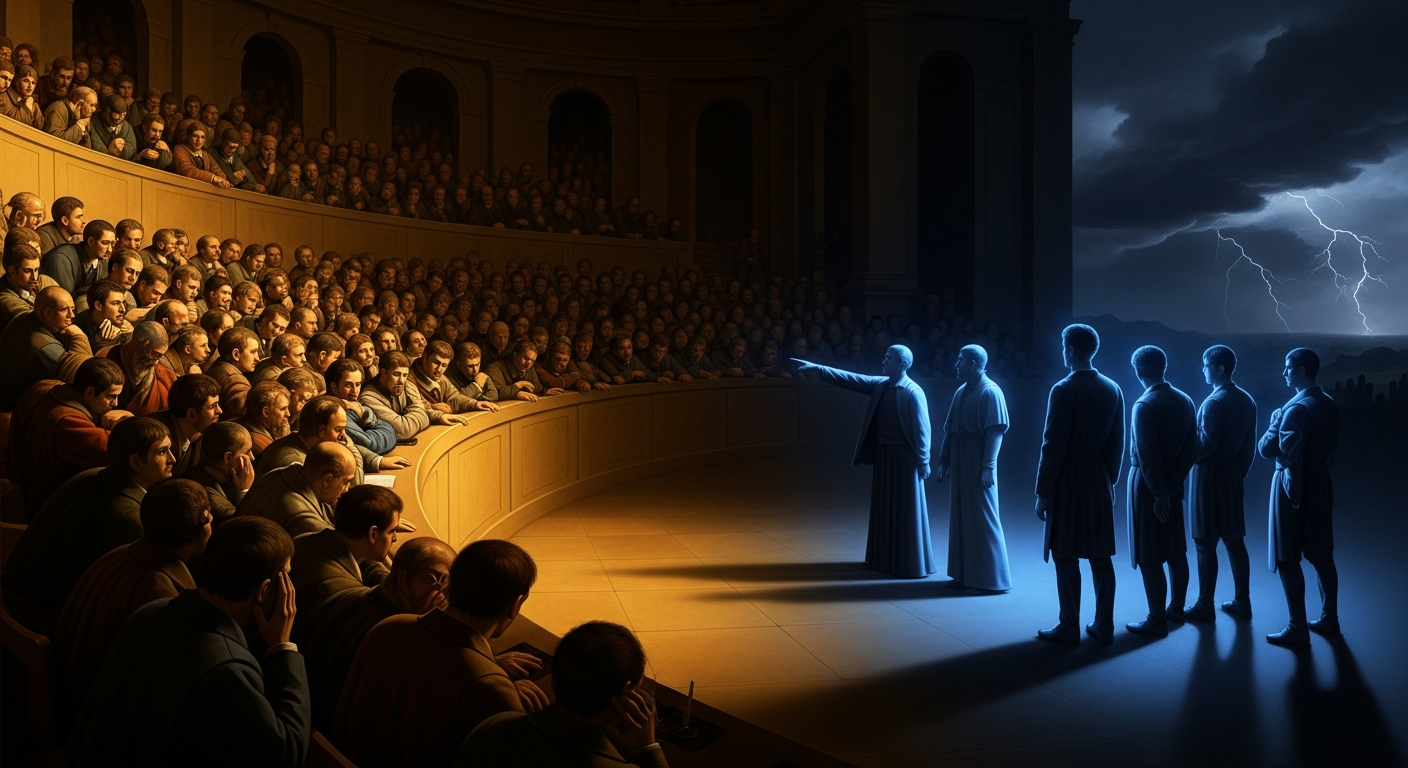

The Minority Signal Paradox

The Strait of Hormuz experiment produced what may be MiroShark’s most interesting finding, and it was not the consensus number.

The 200 agents’ free-form discussion averaged 47.9% probability. Formal one-on-one interviews pushed estimates above 60%. But the 7 most pessimistic agents averaged 22% – within 10 percentage points of Polymarket’s actual 31% market price.

This resembles the mechanism often credited with making prediction markets more accurate than polls. In a poll, every voice counts equally. In a market, participants with high conviction put real capital behind minority positions, and those positions carry disproportionate signal. MiroShark’s agents stake no capital in the discussion phase, but the organic simulation appears to surface a similar dynamic: the contrarian minority, not the comfortable majority, tracked closest to the market price.
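The gaps behind that comparison are easy to check. The snippet below uses only the figures reported for the experiment. The one assumption is that 60% stands in for the interview estimates, which were reported only as "above 60%", so that gap is a lower bound.

```python
# Figures reported for the Strait of Hormuz experiment, in percent.
market_price = 31.0

estimates = {
    "free-form consensus": 47.9,
    "formal interviews (lower bound)": 60.0,  # reported only as "above 60%"
    "7 most pessimistic agents": 22.0,
}

# Absolute distance from the Polymarket price, in percentage points.
gaps = {name: round(abs(value - market_price), 1) for name, value in estimates.items()}

# The signal that landed closest to the market.
closest = min(gaps, key=gaps.get)

for name, gap in gaps.items():
    print(f"{name}: {gap} points from market")
print(f"closest: {closest}")
```

The pessimists miss by 9 points, the consensus by 16.9, and the interviews by at least 29. Note that the pessimists undershoot the market while the other two overshoot it, so "closest" here means smallest absolute error, not a matching direction.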

There is an important caveat. This is a single experiment conducted by an external researcher, not a systematic study. Neither MiroShark nor MiroFish has published benchmarks comparing simulation predictions to real-world outcomes. The Beitroot analysis of MiroFish states plainly that it is “not yet suited for hard numerical forecasting.” Academic frameworks in the broader field have achieved 81-86% sentiment accuracy against ground truth and 92% alignment in replicating original survey responses – but those numbers come from academic research, not MiroShark directly.

The gap between “fascinating experiment” and “reliable prediction tool” is significant. But the experiment is provocative enough to warrant attention.

The Cost Structure That Changes the Conversation

One of the less obvious aspects of MiroShark is its cost profile. Standard simulations run between $0.30 and $5. The recommended cloud model is Qwen3 235B via OpenRouter at roughly $0.30 per simulation. Even premium runs with larger agent counts land around $3-$5.

Compare this to institutional research tools. Bloomberg terminals run thousands per month. Proprietary sentiment analysis services charge accordingly. If agent-based simulation can consistently produce estimates within 10 percentage points of actual market prices at $3-$5 per run, it becomes a viable tool for retail traders and analysts who cannot afford institutional research.

The irony here is layered. A Chinese-language project (MiroFish) was forked for English accessibility, and now its English successor depends on Chinese open-source LLMs (Qwen, DeepSeek) for affordable operation. The Chinese AI open-source ecosystem directly enables the cost structure that makes Western agent simulation accessible to individuals.

The Smart Model feature is critical to maintaining this cost profile at scale. It routes only intelligence-sensitive workflows – report generation, ontology extraction – through premium LLMs while keeping bulk operations like NER and profile generation on cheaper models. Without this routing, a 200-agent simulation over 100 rounds means 20,000+ LLM calls, and costs can spiral to $120 per run.
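A rough sketch of the idea and the arithmetic behind it follows. The task names come from the description above, but the tier labels, the `pick_model` function, and the one-call-per-agent-per-round assumption are hypothetical. The per-call figure is simply the quoted $120 divided by 20,000 calls, not a measured price.

```python
# Tasks described as intelligence-sensitive; everything else goes to the cheap tier.
PREMIUM_TASKS = {"report_generation", "ontology_extraction"}


def pick_model(task: str) -> str:
    """Route a task to a model tier (tier names are placeholders)."""
    return "premium" if task in PREMIUM_TASKS else "bulk"


def llm_calls(agents: int, rounds: int) -> int:
    """Lower bound on calls, assuming one LLM call per agent per round."""
    return agents * rounds


calls = llm_calls(agents=200, rounds=100)

# Average per-call cost implied by the quoted worst case of $120 per run.
implied_cost_per_call = 120 / calls

print(pick_model("ner"), pick_model("report_generation"))
print(calls, implied_cost_per_call)
```

At roughly six tenths of a cent per call on average, the unrouted worst case is driven by volume more than by any single expensive request, which is why moving the bulk operations to a cheaper tier has such a large effect on the total.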

Where It Sits in the 2026 Landscape

The multi-agent AI market reached $7.8 billion in 2025 and is projected to hit $10.9 billion by the end of 2026. Gartner predicts 40% of enterprise apps will include task-specific agents by year-end. The dominant frameworks – AutoGen (56,683 stars), CrewAI (48,006 stars), LangGraph (28,389 stars) – are general-purpose orchestration tools.

MiroShark is not competing with them. It is a domain-specific application that composes existing frameworks (OASIS for simulation, Neo4j for knowledge graphs) into a vertical product. The distinction matters. The most impactful multi-agent applications in 2026 may turn out to be these kinds of domain-specific compositions rather than general-purpose platforms.

On the prediction market side, AI agents are increasingly dominant on Polymarket. The Polystrat agent executed 4,200+ trades in its first month with returns up to 376% on individual positions. Over 37% of AI agents show positive P&L versus less than half of human traders. MiroShark does not trade real markets, but the integration point – where simulated sentiment feeds inform actual trading execution – is a logical next step that researchers at HKU’s Data Intelligence Lab have already identified.

The Herd Behavior Problem (That Might Be a Feature)

Multiple academic sources confirm that LLM agents exhibit stronger herd behavior than real humans. This is a field-wide problem, not specific to MiroShark. The agents inherit biases from underlying language models and tend to amplify polarization beyond realistic levels.

But in crisis simulation and stress-testing contexts, this framing inverts. If a press release holds up in a simulation known to exaggerate herding, the real-world response is unlikely to be worse. The “weakness” becomes a conservative stress test – closer to a worst case than a typical outcome.

This reframing matters for what may be MiroShark’s most immediately commercial use case: crisis communications testing. With 28% of social media crises going global within an hour, the ability to upload a press release, generate 100+ diverse agents, and watch sentiment evolve across simulated platforms before publishing has direct monetary value. The Beitroot analysis estimates $500-$3,000 per engagement on $80-$300/month infrastructure costs.

The Risks Are Real

For all its promise, MiroShark’s weaknesses are substantial.

Aaron Mars is the sole maintainer. He has contributed roughly 50 of 270 total commits; the remaining 220 or so are inherited from MiroFish’s original author. The bus factor is 1 for all MiroShark-specific code. There is no test suite. No CI/CD. The AGPL-3.0 license – inherited from MiroFish – limits commercial adoption, since anyone offering a modified version as a hosted service must make the corresponding source code available to its users.

The Flask/async impedance mismatch is acknowledged architectural debt. The bundled OASIS fork (“Wonderwall”) makes upstream updates painful. The Docker Compose setup lacks health checks, GPU passthrough, and resource limits.

Mars has tokenized MiroShark on Bankr (Base chain), where 57% of trading fees flow to the developer wallet. Combined with his Aeon autonomous software management framework, the vision is a self-sustaining loop: trading fees fund promotion and development, tokens incentivize community participation, all without human intervention. It is intellectually novel. It is also entirely dependent on sustained speculative trading volume, which is inherently unreliable.

And then there is the fundamental question: can MiroShark’s simulations consistently predict real-world outcomes? One experiment is not a benchmark. Until systematic validation exists – published comparisons of simulation predictions versus actual prediction market resolutions – MiroShark remains a research tool with commercial potential rather than a proven prediction system.

The Builder Behind the Bridge

One detail that grounds MiroShark’s prediction market features in something more than academic abstraction: two days before this analysis, Aaron Mars posted an emotional rant on X about losing money in the Drift protocol exploit on Solana. His complaint was that “a $45B chain” was still getting crippled by such vulnerabilities “six years after DeFi Summer.”

This is someone building tools he would use, with real financial skin in the markets being simulated. That personal urgency – a DeFi trader who has lost real money building a tool to simulate how markets react to crises – likely explains the rapid development velocity (31 commits in the first week, 4+ per day) and the specificity of the Polymarket integration.

Takeaways

For prediction market participants: MiroShark’s Strait of Hormuz experiment suggests that the minority signal – not the consensus – may be the most valuable output of agent simulation. If this finding replicates, the tool becomes most useful not for its average prediction but for surfacing high-conviction contrarian positions at a fraction of institutional research costs.

For crisis communications teams: Pre-publication narrative stress-testing is the most immediately monetizable use case. The cross-platform feedback loop – where social media sentiment drives market movements and market crashes drive social media storms – adds a dimension that pure sentiment analysis cannot replicate. At $3-$5 per simulation, the cost of testing a press release before publishing is effectively zero.

For the multi-agent AI ecosystem: MiroShark’s niche success (if it continues) would validate vertical specialization over horizontal platform plays. The $7.8 billion AI agents market may fragment into domain-specific applications – compositions of existing frameworks tailored to specific use cases – rather than consolidating around a few general-purpose tools.

For anyone watching this space: The fork chain pattern – MiroFish to MiroFish-Offline to MiroShark – represents how open-source projects evolve through community needs rather than central planning. Each step solved a specific barrier. The result is a tool that a 20-year-old in Beijing started, a community translator made accessible, and a DeFi trader in the West turned into something neither of the previous creators envisioned. That is open-source working exactly as intended.

The most important thing that has not happened yet is systematic validation. When (or if) MiroShark publishes benchmarks comparing its predictions to actual market outcomes across dozens of experiments, the conversation changes from “interesting research tool” to something much bigger. Until then, it remains one of the most conceptually compelling projects in the 2026 AI landscape – and one benchmark study away from proving it.