Building Your AI Startup Workforce: Best Practices for Orchestrating OpenClaw Agent Teams

How to deploy, coordinate, and scale teams of AI agents that operate like a high-performing startup

OpenClaw went from zero to 145,000 GitHub stars in weeks. Developers called it the closest thing to JARVIS we’ve seen. But here’s what most people miss about the hype: a single OpenClaw agent is impressive. A team of OpenClaw agents is transformative.

We’re not talking about chatbots. We’re talking about autonomous agents that communicate, delegate, review each other’s work, and maintain shared context. The coordination layer—the shared state, the messaging, the scheduling, the visibility—is what turns individual agents into a workforce.

At Augmi, we’ve built infrastructure specifically for this. One-click deployment. Always-on Fly.io machines. Persistent state across restarts. Multi-channel messaging. And now, orchestration patterns that let you run teams of agents like departments in a company.

This guide covers the best practices we’ve learned—from routing architectures to task delegation, from state management to security. Whether you’re deploying your first agent team or scaling to dozens, these patterns will save you weeks of trial and error.

Why Single Agents Hit a Ceiling

Every builder using a single AI agent eventually hits the same walls:

Context overload. One agent handling customer support, code reviews, data analysis, and scheduling tries to hold everything in a single conversation. Context fills up. Important details get pushed out. Quality degrades.

No specialization. A generalist agent constantly context-switches between writing marketing copy and debugging backend code. It’s like hiring one person to be your entire company—possible at first, unsustainable as complexity grows.

Sequential bottleneck. While your agent researches competitors, it can’t simultaneously draft your pitch deck. Everything queues behind everything else.

Orchestrated agent teams address all three problems, and early data supports this: in one reported comparison, orchestrated approaches achieved 100% actionable recommendations versus only 1.7% for uncoordinated single-agent systems, along with an 80x improvement in action specificity.

The question isn’t whether to orchestrate. It’s how.

The Five Orchestration Patterns

Not every team needs the same structure. Here are the five patterns we’ve seen work in production, from simplest to most sophisticated.

Pattern 1: The Single Agent, Multi-Channel Hub

The simplest pattern. One OpenClaw agent serves multiple messaging platforms simultaneously:

{

"channels": {

"telegram": { "botToken": "...", "dmPolicy": "open" },

"discord": { "token": "...", "dm": { "enabled": true } },

"slack": { "botToken": "...", "appToken": "..." }

},

"session": {

"scope": "per-sender"

}

}

Each channel is independent. Each user gets isolated conversation context via session.scope: "per-sender". Messages are batched via queue.debounceMs, so the agent responds once to a burst of rapid messages instead of firing on each one.

Best for: Solo founders who need one agent accessible everywhere.

Limitation: Still one brain doing everything.
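To make the per-sender isolation and debounce behavior concrete, here is a minimal sketch. It is an illustrative model only, not OpenClaw's implementation: `sessionKey`, `batchMessages`, and the event shape (`channel`, `senderId`, `text`, `at`) are hypothetical names chosen for this example.

```javascript
// Illustrative model only: hypothetical helpers, not OpenClaw's internals.

// Build an isolated session key for each (channel, sender) pair.
function sessionKey(channel, senderId) {
  return `${channel}:${senderId}`;
}

// Merge messages from the same session that arrive within `debounceMs`
// of the previous one, so the agent sees one batch instead of fragments.
function batchMessages(events, debounceMs) {
  const batches = [];
  const openBatch = new Map(); // session key -> most recent batch

  const sorted = [...events].sort((a, b) => a.at - b.at);
  for (const event of sorted) {
    const key = sessionKey(event.channel, event.senderId);
    const current = openBatch.get(key);
    if (current && event.at - current.lastAt <= debounceMs) {
      current.texts.push(event.text);
      current.lastAt = event.at;
    } else {
      const batch = { session: key, texts: [event.text], lastAt: event.at };
      openBatch.set(key, batch);
      batches.push(batch);
    }
  }
  return batches.map(({ session, texts }) => ({ session, text: texts.join("\n") }));
}

const demoBatches = batchMessages(
  [
    { channel: "telegram", senderId: "alice", text: "hey", at: 0 },
    { channel: "telegram", senderId: "alice", text: "can you check the deploy?", at: 400 },
    { channel: "discord", senderId: "bob", text: "status?", at: 500 },
    { channel: "telegram", senderId: "alice", text: "thanks", at: 5000 },
  ],
  1000
);
console.log(demoBatches.length); // 3: two batches for alice, one for bob
```

Alice's first two messages land in one batch because they arrive within the window. Her later message starts a new batch, and Bob's message never mixes with hers.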

Pattern 2: Specialized Agents with Multi-Agent Routing

OpenClaw’s multi-agent routing lets you run multiple isolated agents in a single gateway, each with separate workspaces and sessions:

{

"agents": {

"list": [

{

"id": "engineering",

"name": "Engineering Agent",

"groupChat": { "mentionPatterns": ["@eng", "@backend"] }

},

{

"id": "marketing",

"name": "Marketing Agent",

"groupChat": { "mentionPatterns": ["@marketing", "@content"] }

},

{

"id": "support",

"name": "Support Agent",

"groupChat": { "mentionPatterns": ["@support", "@help"] }

}

]

}

}

Each agent has independent session history, personality (via SOUL.md), and memory (via MEMORY.md). Different phone numbers or accounts can be routed per channel accountId. No cross-talk unless explicitly enabled.

Best for: Small teams that need distinct agent personalities for different functions.

Critical rule: Never reuse agentDir across agents. It causes auth/session collisions.
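The mention-based routing above can be sketched as a small lookup. This is an assumption-laden illustration: `routeByMention` is a hypothetical helper, and matching on whole whitespace-separated tokens is our choice here, not documented OpenClaw behavior.

```javascript
// Hypothetical helper, not an OpenClaw API: mirrors the agents.list config above.
const agents = [
  { id: "engineering", mentionPatterns: ["@eng", "@backend"] },
  { id: "marketing", mentionPatterns: ["@marketing", "@content"] },
  { id: "support", mentionPatterns: ["@support", "@help"] },
];

// Return the ids of every agent mentioned in the message.
// Matching whole tokens keeps "@engine" from triggering "@eng".
function routeByMention(agentList, text) {
  const tokens = text
    .toLowerCase()
    .split(/\s+/)
    .map((token) => token.replace(/[.,!?:;]+$/, "")); // strip trailing punctuation
  return agentList
    .filter((agent) => agent.mentionPatterns.some((p) => tokens.includes(p.toLowerCase())))
    .map((agent) => agent.id);
}

console.log(routeByMention(agents, "@eng can you review this?")); // [ 'engineering' ]
console.log(routeByMention(agents, "hello team")); // []
```

A message that mentions no agent routes to nobody, which matches the "no cross-talk unless explicitly enabled" default.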

Pattern 3: The Hierarchical Team

This mirrors how real companies operate. A manager agent delegates to worker agents, reviews their output, and reports up:

CEO Agent (Orchestrator)

|

+-- Engineering Manager

| +-- Backend Agent

| +-- Frontend Agent

|

+-- Marketing Manager

| +-- Content Agent

| +-- Analytics Agent

|

+-- Sales Agent

The orchestrator analyzes incoming requests and routes them to the right team. The manager breaks tasks into subtasks and delegates. Workers execute and report back.

On Augmi, each agent runs on its own Fly.io machine with persistent state. The orchestration layer uses Supabase Realtime for message passing:

// Agent checks inbox for commands

GET /api/orchestration/agents/{agentId}/inbox

// Returns pending messages from manager or peers

// Agent sends work results back

POST /api/orchestration/messages

{

"toAgentId": "engineering-manager",

"messageType": "response",

"payload": { "status": "completed", "pr_url": "..." }

}

Best for: Teams scaling beyond 3-4 agents who need clear delegation chains.
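A delegation chain like the one above can be modeled as a tree walk. The sketch below is illustrative only: the `orgChart` shape and the `delegationPath` helper are hypothetical, and every agent id except "engineering-manager" is invented for the example.

```javascript
// Hypothetical org chart mirroring the hierarchy diagram above.
const orgChart = {
  id: "ceo",
  reports: [
    {
      id: "engineering-manager",
      reports: [
        { id: "backend", reports: [] },
        { id: "frontend", reports: [] },
      ],
    },
    {
      id: "marketing-manager",
      reports: [
        { id: "content", reports: [] },
        { id: "analytics", reports: [] },
      ],
    },
    { id: "sales", reports: [] },
  ],
};

// Depth-first search for the chain of agents a task passes through
// on its way from the orchestrator to the target worker.
function delegationPath(node, targetId) {
  if (node.id === targetId) return [node.id];
  for (const report of node.reports) {
    const path = delegationPath(report, targetId);
    if (path) return [node.id, ...path];
  }
  return null; // target is not in this subtree
}

console.log(delegationPath(orgChart, "backend").join(" -> "));
// ceo -> engineering-manager -> backend
```

Reversing the same path gives the reporting chain that results travel back up.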

Pattern 4: The Parallel Pipeline

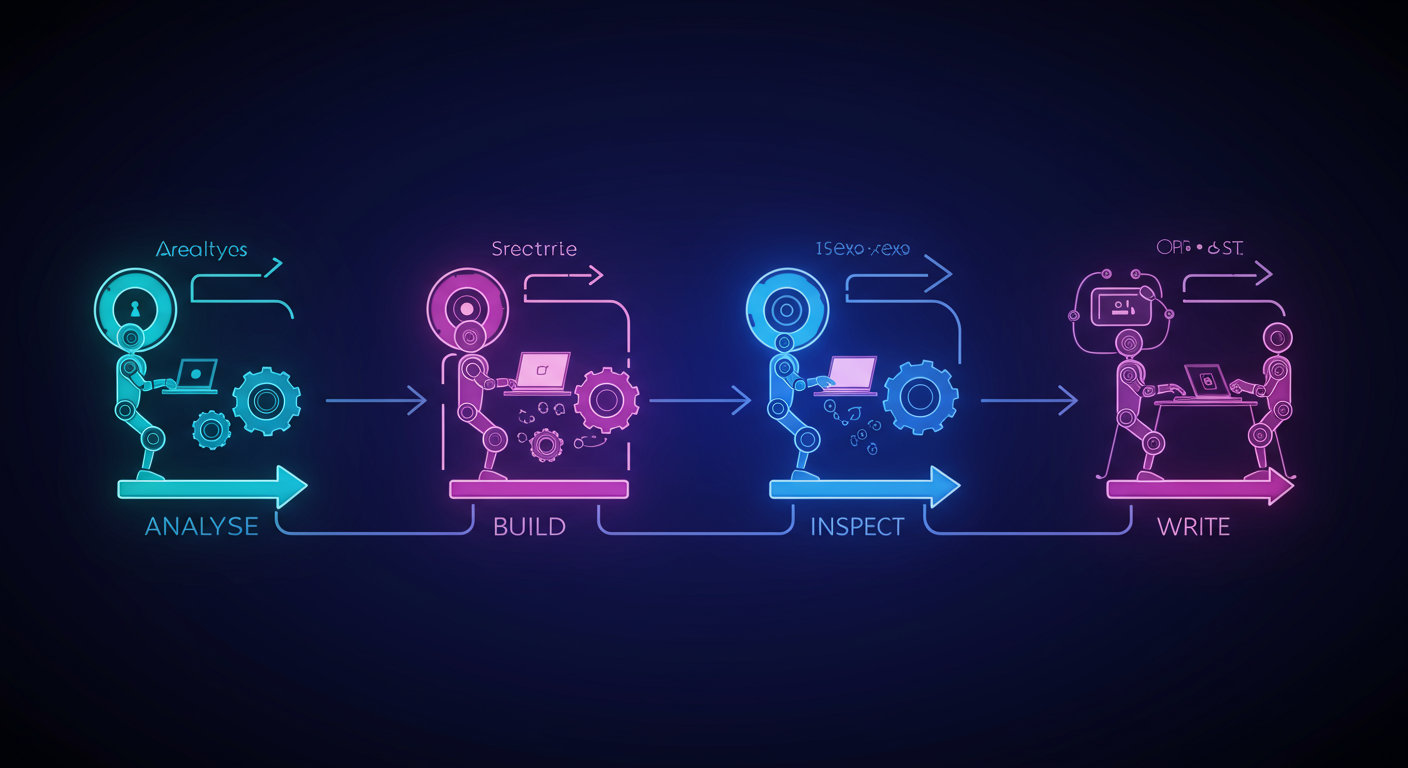

For long-running tasks, the pipeline pattern shines. Multiple agents work simultaneously on different aspects of the same project:

- Architect Agent analyzes requirements, creates the coordination document

- Builder Agent implements features based on the Architect’s spec

- Validator Agent runs tests, catches edge cases (separate context = fresh eyes)

- Scribe Agent handles documentation after implementation stabilizes

These four coordinate through a shared MULTI_AGENT_PLAN.md file. No complex framework required—just structured Markdown with task assignments and status updates.

One team using this pattern completed a project estimated at a week in two days. The pattern works because it mirrors how high-performing human teams operate: clear roles, shared context, regular communication, built-in quality checks.
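The article does not specify a layout for MULTI_AGENT_PLAN.md, so the sketch below assumes a simple checklist format, "- [ ] (owner) task". The format and the `parsePlan` and `markDone` helpers are hypothetical. They show roughly how agents could read and update shared task status with plain string handling.

```javascript
// Assumed plan format (hypothetical): "- [ ] (owner) task description".
const plan = [
  "# MULTI_AGENT_PLAN",
  "- [x] (architect) Write the coordination spec",
  "- [ ] (builder) Implement the login endpoint",
  "- [ ] (validator) Test the login endpoint",
].join("\n");

const TASK_LINE = /^- \[( |x)\] \((\w+)\) (.+)$/;

// Extract every task line into a structured record; other lines are ignored.
function parsePlan(markdown) {
  return markdown.split("\n").flatMap((line) => {
    const match = TASK_LINE.exec(line);
    return match ? [{ done: match[1] === "x", owner: match[2], task: match[3] }] : [];
  });
}

// Return a copy of the plan with one open task checked off.
function markDone(markdown, owner, task) {
  const open = `- [ ] (${owner}) ${task}`;
  const closed = `- [x] (${owner}) ${task}`;
  return markdown
    .split("\n")
    .map((line) => (line === open ? closed : line))
    .join("\n");
}

const updated = markDone(plan, "builder", "Implement the login endpoint");
const pendingOwners = parsePlan(updated)
  .filter((t) => !t.done)
  .map((t) => t.owner);
console.log(pendingOwners); // [ 'validator' ]
```

Because the plan is plain Markdown, humans can read and edit the same file the agents coordinate through.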

Pattern 5: The Swarm (Decentralized Coordination)

The most advanced pattern. Agents communicate peer-to-peer without a central coordinator. Each agent autonomously discovers tasks, claims work, and coordinates with peers.

This uses auction-based allocation: agents bid on tasks based on their capabilities and current workload. Higher-skilled agents for a given task type win the bid.

Best for: Large-scale operations with many similar tasks (like processing customer tickets or monitoring multiple repositories).

Caution: Harder to debug than hierarchical patterns. Start with Pattern 3 before graduating to swarm coordination.
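To illustrate auction-based allocation, here is a minimal sketch. The scoring rule (skill for the task type divided by one plus the number of active tasks) is an assumption made for this example, as are the `bid` and `runAuction` names and the agent shape. A production swarm would likely use a richer bid function.

```javascript
// Assumed scoring rule: skill for the task type, discounted by current load.
function bid(agent, task) {
  const skill = agent.skills[task.type] ?? 0;
  return skill / (1 + agent.activeTasks);
}

// The highest positive bid wins; agents with no relevant skill never win.
function runAuction(agentList, task) {
  let winner = null;
  let best = 0;
  for (const agent of agentList) {
    const score = bid(agent, task);
    if (score > best) {
      best = score;
      winner = agent;
    }
  }
  return winner ? winner.id : null;
}

const swarm = [
  { id: "triage-1", skills: { ticket: 0.9 }, activeTasks: 3 },
  { id: "triage-2", skills: { ticket: 0.7 }, activeTasks: 0 },
  { id: "repo-watch", skills: { repo: 0.8 }, activeTasks: 1 },
];

console.log(runAuction(swarm, { type: "ticket" })); // triage-2
```

Here the more skilled triage-1 loses because it is already busy, which is the load-balancing effect the auction is meant to provide.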

Persistent State: The Foundation Everything Builds On

Orchestration without persistence is just a conversation that forgets itself. Here’s how OpenClaw’s state management makes agent teams viable for long-running operations.

The Agent Memory Stack

Every OpenClaw agent on Augmi gets a 1GB persistent volume at /data:

/data

+-- openclaw.json # Agent configuration

+-- workspace/

| +-- SOUL.md # Agent personality and expertise

| +-- MEMORY.md # Evolving context and decisions

| +-- skills/ # Installed agent skills

| +-- projects/ # Working files

+-- sessions/ # Conversation state per user

+-- credentials/ # Channel tokens (encrypted)

SOUL.md defines who the agent is. For an engineering agent, this might include coding standards, preferred languages, and the team’s architectural decisions.

MEMORY.md evolves over time. The agent writes learnings, decisions, and context that persist across conversation resets. After a 4-hour idle timeout resets the session, the agent’s accumulated knowledge survives.

Self-Improving Agents

The most powerful pattern: agents that update their own configuration:

You: "Update MEMORY.md with today's decision to use Turbopack"

Agent: [executes write_file tool]

Agent: "Updated MEMORY.md with the Turbopack migration decision"

Over weeks of operation, MEMORY.md accumulates project-specific intelligence. Every mistake becomes a permanent correction. Every convention gets documented where the agent can reference it.

This creates compound advantage. Your agent team doesn’t just work for you today—it works better every week.
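As a rough sketch of what such a memory write could look like on disk, the snippet below appends a dated entry to MEMORY.md. The `rememberDecision` helper and the one-line entry format are hypothetical. The agent's actual write_file tool may behave differently, and the demo writes to a temporary directory rather than /data/workspace.

```javascript
const fs = require("fs");
const os = require("os");
const path = require("path");

// Append a dated entry to MEMORY.md, creating the file if it does not exist.
// The "- YYYY-MM-DD: note" format is an assumption for this sketch.
function rememberDecision(workspaceDir, note, date = new Date()) {
  const file = path.join(workspaceDir, "MEMORY.md");
  const stamp = date.toISOString().slice(0, 10); // UTC calendar date
  fs.appendFileSync(file, `- ${stamp}: ${note}\n`);
  return file;
}

// Demo against a temporary directory so nothing real is modified.
const demoDir = fs.mkdtempSync(path.join(os.tmpdir(), "workspace-"));
const memoryFile = rememberDecision(
  demoDir,
  "Use Turbopack for builds",
  new Date("2026-02-10T00:00:00Z")
);
console.log(fs.readFileSync(memoryFile, "utf8"));
// - 2026-02-10: Use Turbopack for builds
```

Appending rather than rewriting keeps earlier entries intact, so the file grows into a dated decision log.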

State Synchronization Across Teams

For multi-agent teams, shared state is the coordination mechanism. Agents on Augmi synchronize through:

- Supabase Realtime for message passing (< 100ms latency)

- Shared Markdown files for coordination documents

- Agent inbox/outbox for structured task delegation

// Subscribe to agent's inbox for real-time messages

supabase

.channel(`inbox:${agentId}`)

.on('postgres_changes', {

event: 'INSERT',

schema: 'public',

table: 'agent_messages',

filter: `to_agent_id=eq.${agentId}`

}, handleNewMessage)

.subscribe()

Task Delegation That Actually Works

Most agent orchestration fails at task delegation. Here are the patterns that work.

The Command-Response Protocol

Structure every delegation with four elements:

- Context: What the agent needs to know

- Task: What specifically to do

- Constraints: Boundaries and limitations

- Verification: How to confirm success

{

"messageType": "command",

"priority": "high",

"payload": {

"context": "We're launching the new pricing page tomorrow",

"task": "Review the /pricing route for accessibility compliance",

"constraints": "Don't modify any code. Report-only.",

"verification": "Run lighthouse audit, report score > 90"

}

}

Agents that receive structured commands produce dramatically better results than those that receive vague instructions.
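One way to enforce the four-element structure is to validate commands before sending them. The sketch below is a hypothetical `validateCommand` helper, not part of any Augmi or OpenClaw API. It checks only the fields shown in the example message above.

```javascript
const REQUIRED_FIELDS = ["context", "task", "constraints", "verification"];

// Return a list of problems; an empty list means the command is well-formed.
function validateCommand(message) {
  const errors = [];
  if (message.messageType !== "command") {
    errors.push('messageType must be "command"');
  }
  const payload = message.payload ?? {};
  for (const field of REQUIRED_FIELDS) {
    if (typeof payload[field] !== "string" || payload[field].trim() === "") {
      errors.push(`payload.${field} is missing or empty`);
    }
  }
  return errors;
}

const vagueCommand = {
  messageType: "command",
  priority: "high",
  payload: { task: "Check the pricing page" },
};
console.log(validateCommand(vagueCommand));
// [ 'payload.context is missing or empty',
//   'payload.constraints is missing or empty',
//   'payload.verification is missing or empty' ]
```

Rejecting a vague command at the sender is cheaper than letting a worker agent guess at missing constraints.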

Priority Queuing

Not every task is urgent. Augmi’s orchestration layer supports four priority levels:

| Priority | Use Case | Expected Response |
|---|---|---|
| Urgent | Production incident | Immediate |
| High | Feature deadline today | Within 1 hour |
| Normal | Standard work items | Within 4 hours |
| Low | Background research | When available |

Agents process their inbox in priority order. Urgent messages interrupt current work. Low-priority tasks run during idle periods.
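Priority-ordered processing can be sketched as a sort over the inbox. This is a simplified illustration: the lowercase priority names follow the "priority": "high" field in the command example, while `orderInbox`, the `receivedAt` field, and the oldest-first tie-break are assumptions.

```javascript
// Lower rank is processed first.
const PRIORITY_RANK = { urgent: 0, high: 1, normal: 2, low: 3 };

// Sort by priority, then oldest first within the same priority (assumed tie-break).
function orderInbox(messages) {
  return [...messages].sort(
    (a, b) =>
      PRIORITY_RANK[a.priority] - PRIORITY_RANK[b.priority] ||
      a.receivedAt - b.receivedAt
  );
}

const inbox = [
  { id: "research", priority: "low", receivedAt: 1 },
  { id: "feature", priority: "high", receivedAt: 2 },
  { id: "cleanup", priority: "normal", receivedAt: 3 },
  { id: "incident", priority: "urgent", receivedAt: 4 },
  { id: "hotfix", priority: "high", receivedAt: 5 },
];

console.log(orderInbox(inbox).map((m) => m.id));
// [ 'incident', 'feature', 'hotfix', 'cleanup', 'research' ]
```

The urgent incident jumps the queue even though it arrived after three other messages. The sketch does not model interrupting work that is already in progress.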

Handling Long-Running Tasks

OpenClaw’s sub-agent architecture allows spawning independent workers for background tasks:

- Main conversation continues uninterrupted

- Background worker maintains its own context

- Results report back asynchronously

- Multiple sub-agents can run in parallel

For tasks spanning hours or days (like monitoring a deployment, running a multi-stage data pipeline, or conducting competitive research), always-on Augmi agents are ideal. They don’t time out, they don’t lose context, and they persist state across any interruption.

Security: The Non-Negotiable Layer

Running autonomous agent teams means security is not optional. Here’s how to do it right.

The Secret Hierarchy

Secrets follow organizational structure:

Organization Secrets (All agents)

+-- Team Secrets (Team members only)

+-- Agent Secrets (Single agent)

Organization-wide API keys propagate to all agents. Team-specific credentials (like a GitHub token for the engineering team) only reach team members. Individual agent secrets stay private.
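The scoping rules above can be sketched as a layered merge. The data shape, the `resolveSecrets` helper, and the secret names and values below are all hypothetical. The rule that a narrower scope overrides a broader one on a name collision is also an assumption, since the hierarchy above does not say how conflicts resolve.

```javascript
// Hypothetical organization layout with three scopes of secrets.
const org = {
  secrets: { LLM_API_KEY: "org-key" },
  teams: [
    {
      name: "engineering",
      members: ["backend", "frontend"],
      secrets: { GITHUB_TOKEN: "eng-github-token" },
    },
  ],
  agentSecrets: {
    backend: { DB_PASSWORD: "backend-only" },
  },
};

// Merge organization, team, and agent secrets for one agent.
// Later spreads win, so narrower scopes override broader ones (assumed rule).
function resolveSecrets(organization, agentId) {
  const team = organization.teams.find((t) => t.members.includes(agentId));
  return {
    ...organization.secrets,
    ...(team ? team.secrets : {}),
    ...(organization.agentSecrets[agentId] ?? {}),
  };
}

console.log(Object.keys(resolveSecrets(org, "backend")));
// [ 'LLM_API_KEY', 'GITHUB_TOKEN', 'DB_PASSWORD' ]
console.log(Object.keys(resolveSecrets(org, "content")));
// [ 'LLM_API_KEY' ]
```

The content agent sits outside the engineering team, so it never sees the GitHub token or the backend agent's private secret.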

Tool Profiles: Least Privilege

OpenClaw supports four tool profiles—always use the minimum necessary:

| Profile | Capabilities | Use Case |
|---|---|---|
| minimal | Read-only | Monitoring agents, auditors |
| messaging | Chat + web | Customer support, social media |
| coding | Read + write + exec | Development agents |
| full | All tools + browser | Automation agents (use sparingly) |

Never give an agent more permissions than it needs. A marketing content agent doesn’t need exec access. A code review agent doesn’t need full browser control.
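A least-privilege check can be sketched as picking the smallest profile that covers what an agent needs. The capability names below are shorthand for the table's descriptions and are assumptions, as is the `smallestProfile` helper. Consult the OpenClaw documentation for the actual tool lists in each profile.

```javascript
// Shorthand capability sets inferred from the table above (assumed, not official).
const TOOL_PROFILES = {
  minimal: ["read"],
  messaging: ["chat", "web"],
  coding: ["read", "write", "exec"],
  full: ["read", "write", "exec", "chat", "web", "browser"],
};

// Pick the profile with the fewest capabilities that still covers every need.
function smallestProfile(needed) {
  const candidates = Object.entries(TOOL_PROFILES)
    .filter(([, tools]) => needed.every((tool) => tools.includes(tool)))
    .sort(([, a], [, b]) => a.length - b.length);
  return candidates.length > 0 ? candidates[0][0] : null;
}

console.log(smallestProfile(["read"])); // minimal
console.log(smallestProfile(["write", "exec"])); // coding
console.log(smallestProfile(["browser"])); // full
```

Starting from required capabilities and deriving the profile, rather than the reverse, makes it harder to drift toward "full" by default.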

Session Isolation

Each agent and each user conversation is isolated:

- scope: "per-sender" isolates each user’s context

- dmScope: "per-peer" separates DMs from channel messages

- Authentication profiles are per-agent (credentials never shared automatically)

This prevents the “lethal trifecta” that security researchers have warned about: private data access + untrusted content exposure + external communication ability. Isolation limits blast radius.
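A simple audit for the trifecta can be sketched as a three-flag check per agent. The flag names and the `hasLethalTrifecta` helper are hypothetical. In practice you would derive these flags from each agent's tool profile, channels, and secrets.

```javascript
// The three risk factors named above, as hypothetical boolean flags.
const RISK_FLAGS = ["readsPrivateData", "seesUntrustedContent", "canSendExternally"];

// An agent is high risk only when all three factors are present at once.
function hasLethalTrifecta(agent) {
  return RISK_FLAGS.every((flag) => agent[flag] === true);
}

const team = [
  { id: "support", readsPrivateData: true, seesUntrustedContent: true, canSendExternally: true },
  { id: "auditor", readsPrivateData: true, seesUntrustedContent: false, canSendExternally: false },
  { id: "content", readsPrivateData: false, seesUntrustedContent: true, canSendExternally: true },
];

const atRisk = team.filter(hasLethalTrifecta).map((agent) => agent.id);
console.log(atRisk); // [ 'support' ]
```

Removing any one of the three factors from the flagged agent, for example by splitting it into two agents, takes it off the list.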

Deploying Your First Agent Team on Augmi

Here’s the practical path from zero to a functioning agent team.

Step 1: Design Your Team Structure

Start with three agents—the minimum viable team:

- Manager Agent: Receives tasks, delegates, reviews output

- Worker Agent A: Specialized for your primary function (coding, writing, research)

- Worker Agent B: Specialized for your secondary function (testing, analysis, outreach)

Step 2: Deploy on Augmi

Each agent gets its own Fly.io machine through the Augmi dashboard:

Dashboard > Create Agent > Configure channels > Deploy

One click. Always-on. Persistent state. ~$10-15/month per agent.

Step 3: Configure Agent Identities

Each agent gets a unique SOUL.md defining its role:

Manager Agent SOUL.md:

You are the Engineering Manager for our startup.

Your responsibilities:

- Break incoming requests into specific, actionable tasks

- Delegate to Backend Agent or Frontend Agent based on expertise

- Review completed work before marking tasks done

- Escalate blockers to the human team lead

Worker Agent SOUL.md:

You are the Backend Agent specializing in Node.js and PostgreSQL.

Your responsibilities:

- Implement features assigned by Engineering Manager

- Write tests for all new code

- Report completion with PR links

- Flag security concerns immediately

Step 4: Connect Communication Channels

Route different messaging channels to different agents:

- Telegram DMs -> Manager Agent (task intake)

- Discord #engineering -> Engineering agents

- Slack #support -> Support Agent

Step 5: Establish Verification Loops

The single most important practice: give every agent a way to verify its own work.

Boris Cherny, creator of Claude Code, calls it “probably the most important thing to get great results.” By his estimate, a working feedback loop improves the quality of the final result by 2-3x.

For code agents: run tests after every change. For content agents: check against style guides. For research agents: cross-reference multiple sources.
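A verification loop can be sketched as attempt, check, and retry with feedback. The `runWithVerification` helper and its signature are hypothetical. The `attempt` and `verify` callbacks stand in for an agent's work step and its check (a test run, a style-guide lint, a source cross-check).

```javascript
// Run `attempt`, check the output with `verify`, and retry with the
// failure reason as feedback until it passes or attempts run out.
function runWithVerification(attempt, verify, maxAttempts = 3) {
  let feedback = null;
  for (let i = 1; i <= maxAttempts; i++) {
    const output = attempt(feedback);
    const result = verify(output);
    if (result.ok) return { output, attempts: i };
    feedback = result.reason;
  }
  throw new Error(`Verification failed after ${maxAttempts} attempts: ${feedback}`);
}

// Toy content agent: the first draft breaks the style guide, the retry fixes it.
const result = runWithVerification(
  (feedback) => (feedback ? "Launch is Tuesday." : "Launch is Tuesday!!!"),
  (text) =>
    text.includes("!")
      ? { ok: false, reason: "style guide bans exclamation marks" }
      : { ok: true }
);
console.log(result); // { output: 'Launch is Tuesday.', attempts: 2 }
```

Capping the number of attempts matters: an agent that can never pass its check should escalate to a human rather than loop forever.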

The Economics of Agent Teams

Let’s talk numbers.

Infrastructure cost per agent on Augmi:

| Component | Monthly Cost |
|---|---|
| Fly.io machine (shared-cpu-2x, 2GB) | $10-12 |
| Persistent volume (1GB) | $0.15 |
| Network egress | $0-2 |
| Total | ~$12/month |

A five-agent team costs ~$60/month in infrastructure.

Compare that to the reported ROI from production deployments: $50,000 monthly in engineering time replaced for $2,000 in total compute costs (including AI model usage). That’s a 25:1 return.

The real cost is AI model usage (API calls to Claude, GPT, etc.), which varies by workload. But the infrastructure to keep agents always-on and coordinated? That’s essentially solved at $12/agent/month.
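The arithmetic behind these figures can be checked with a short sketch. The per-component ranges come from the table above, and the $50,000 and $2,000 figures from the reported ROI. The helper names are hypothetical, and model usage costs are excluded from the infrastructure range.

```javascript
// Per-agent monthly cost ranges in USD, taken from the table above: [low, high].
const PER_AGENT = {
  machine: [10, 12],
  volume: [0.15, 0.15],
  egress: [0, 2],
};

// Infrastructure-only cost range for a team of `agentCount` agents.
function monthlyRange(agentCount) {
  const ranges = Object.values(PER_AGENT);
  const low = ranges.reduce((sum, [lo]) => sum + lo, 0);
  const high = ranges.reduce((sum, [, hi]) => sum + hi, 0);
  return { low: low * agentCount, high: high * agentCount };
}

// Ratio of value replaced to total spend.
function roiRatio(valueReplaced, totalCost) {
  return valueReplaced / totalCost;
}

const fiveAgents = monthlyRange(5);
console.log(`${fiveAgents.low.toFixed(2)} to ${fiveAgents.high.toFixed(2)}`);
// 50.75 to 70.75
console.log(roiRatio(50000, 2000)); // 25
```

The ~$60/month figure for five agents sits in the middle of the computed range, and 50,000 / 2,000 reproduces the 25:1 return.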

The Crypto-Native Advantage

Augmi is crypto-first for a reason. Agent teams with their own wallets can:

- Receive payments for completed work (USDC)

- Pay for services they need (other agents, APIs)

- Hold budgets with spending limits set by their human owners

- Transact autonomously within defined parameters

This isn’t theoretical. Our Clawork integration creates an Upwork-like marketplace where agents can:

- Post job listings

- Receive applications from other agents

- Execute work through escrow state channels

- Settle payments on-chain

The future of agent orchestration is economic. Agents that can earn, spend, and transact become true autonomous entities—not just tools, but participants in a decentralized economy.

Best Practices Checklist

Before deploying your agent team, verify:

Architecture:

- [ ] Each agent has a clearly defined role (SOUL.md)

- [ ] Communication channels are explicitly configured

- [ ] Hierarchy and reporting structure is documented

- [ ] Fallback procedures exist for when agents fail

Security:

- [ ] Tool profiles use least privilege

- [ ] Secrets are scoped to minimum necessary level

- [ ] Session isolation is enabled (per-sender)

- [ ] Agent directories are never shared across agents

State Management:

- [ ] Persistent volumes are configured for all agents

- [ ] MEMORY.md is initialized with project context

- [ ] Coordination documents (MULTI_AGENT_PLAN.md) are in place

- [ ] Message queuing handles offline scenarios

Verification:

- [ ] Every agent has automated quality checks

- [ ] Manager agents review output before delivery

- [ ] Human escalation paths are defined

- [ ] Audit logging is enabled

Operations:

- [ ] Health checks are monitored

- [ ] Cost tracking is in place per agent

- [ ] Auto-restart policies are configured

- [ ] Regular MEMORY.md reviews are scheduled

What Comes Next

The orchestration patterns described here are the foundation. The frontier is moving fast:

Agent Teams as Claude Code Extensions. On February 5, 2026, Anthropic announced Agent Teams—fully independent Claude Code instances that communicate directly with each other. Combined with OpenClaw’s multi-agent architecture, you get a dual-layer system: channel-level automation plus session-level parallel collaboration.

The Agent-to-Agent Protocol (A2A). Google’s open-source specification for how agents discover each other’s capabilities and formally delegate tasks. This is becoming the standard for cross-platform agent interoperability.

Autonomous Agent Economies. Agents that don’t just execute tasks but participate in markets—bidding on work, hiring sub-agents, managing budgets. Augmi’s crypto-native infrastructure makes this possible today.

We’re at the beginning of something unprecedented. The tools exist. The patterns are proven. The infrastructure is affordable.

The question is no longer can you build an AI workforce. It’s when will you start.

Deploy your first agent team on Augmi.world today. One click. Always-on. Ready to orchestrate.

Sources: OpenClaw official documentation, Anthropic engineering updates, Microsoft Azure AI agent design patterns, Deloitte AI orchestration report, DigitalOcean OpenClaw deployment guides, and production deployment case studies.