Orchestrating Claude Code with OpenClaw: The Best Libraries and How to Choose

Your AI Agents Need a Conductor

You’ve got Claude Code humming along—reviewing PRs, writing tests, refactoring modules. But what happens when one agent isn’t enough? What if you need five Claude instances investigating a bug from different angles, or a fleet of agents building a full-stack feature in parallel?

That’s the orchestration problem. And in early 2026, two forces are converging to solve it: OpenClaw, the open-source AI agent framework that gained 157,000 GitHub stars in 60 days, and Claude Code’s rapidly maturing multi-agent capabilities.

After analyzing 40+ sources—from Anthropic’s internal engineering papers to security audits by CrowdStrike and Cisco—here’s what actually works, what’s hype, and which library you should pick.

What OpenClaw Actually Is (And Isn’t)

First, let’s clear up a common misconception. OpenClaw is not an AI model. It’s server software that routes information between external services and AI models of your choice—Claude, GPT, Gemini, or others.

Created by Peter Steinberger and formerly known as Clawdbot, then Moltbot, OpenClaw uses a plugin architecture called “skills” to connect to anything: email, calendars, databases, APIs, browsers. Think of it as middleware for AI agents.

The critical insight: 65% of OpenClaw skills are now wrappers around MCP (Model Context Protocol) servers. This matters enormously for orchestration, because MCP is also the protocol that Claude Code uses natively. Same interface, different entry points.
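To make the "same interface" point concrete, here is a minimal sketch of the JSON-RPC 2.0 message an MCP client sends to invoke a tool. The `tools/call` method and the `name`/`arguments` parameters come from the MCP specification. The tool name `send_email` and its arguments are hypothetical, standing in for whatever an OpenClaw skill might expose.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests need a unique id per connection


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments, for illustration only.
request = build_tool_call(
    "send_email", {"to": "team@example.com", "subject": "Build status"}
)
print(json.dumps(request, indent=2))
```

Whether the tool lives behind an OpenClaw skill or a standalone MCP server, the caller builds the same message shape, which is why the two can be mixed freely.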

The Three Tiers of Claude Code Orchestration

Every approach to multi-agent Claude Code—whether through OpenClaw or standalone—falls into one of three tiers:

Tier 1: Subagents (2-5 Agents)

The Claude Agent SDK’s native subagent system. Each subagent runs in its own isolated context, executes a focused task, and returns summarized results to the parent agent.

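import asyncio

from claude_agent_sdk import query, ClaudeAgentOptions, AgentDefinition


async def main():
    async for message in query(
        prompt="Review authentication module comprehensively",
        options=ClaudeAgentOptions(
            # "Task" lets the parent agent delegate work to the subagents below
            allowed_tools=["Read", "Grep", "Glob", "Task"],
            agents={
                "security-scanner": AgentDefinition(
                    description="Security vulnerability specialist",
                    # prompt is the subagent's system prompt (illustrative wording)
                    prompt="Review the code for security vulnerabilities.",
                    tools=["Read", "Grep", "Glob"],
                    model="sonnet",
                ),
                "performance-reviewer": AgentDefinition(
                    description="Performance and optimization analyst",
                    prompt="Review the code for performance problems.",
                    tools=["Read", "Grep", "Glob"],
                    model="sonnet",
                ),
            },
        ),
    ):
        # Only the final result message carries a `result` attribute
        if hasattr(message, "result"):
            print(message.result)


asyncio.run(main())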

Token cost: Low. Results are summarized back. Best for: Code review, testing, focused analysis—tasks with clear inputs and outputs.
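The reason subagents stay cheap is the fan-out/fan-in shape: each one does its work in a private context and hands back only a short summary. The sketch below simulates that shape with plain `asyncio`. It does not call the Claude Agent SDK, and `run_subagent` is a hypothetical stand-in for a real subagent.

```python
import asyncio


async def run_subagent(name: str, task: str) -> str:
    """Stand-in for a subagent: works in its own context, returns only a summary."""
    # This list models the subagent's private context; it never leaves the function.
    private_context = [f"{name} read file {i}" for i in range(100)]
    await asyncio.sleep(0)  # placeholder for real model and tool calls
    return f"{name}: finished '{task}' after {len(private_context)} steps"


async def run_parent(task: str, subagents: list) -> list:
    """Fan the task out to all subagents concurrently and collect their summaries."""
    return await asyncio.gather(*(run_subagent(name, task) for name in subagents))


summaries = asyncio.run(
    run_parent("review auth module", ["security-scanner", "performance-reviewer"])
)
for line in summaries:
    print(line)
```

The parent sees two one-line summaries rather than two hundred intermediate steps, which is the token saving in miniature.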

Tier 2: Agent Teams (5-15 Agents)

The experimental but functional multi-instance system in Claude Code. Unlike subagents, each teammate is a fully independent Claude Code session with its own context window.

{
  "env": {
    "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1"
  }
}

Then just ask: “Create an agent team: one teammate on the API layer, one on the frontend components, one writing integration tests.”

The system handles task dependency management, peer-to-peer messaging between agents, and file-locking to prevent race conditions.

Token cost: High. Each teammate is a separate instance. Best for: Complex collaborative work—debugging competing hypotheses, parallel feature development, research from multiple angles.
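Task dependency management and file locking are easier to reason about with a small model. The sketch below is not how Claude Code implements agent teams. It is an assumption-laden illustration that orders a hypothetical task graph with the standard library's `graphlib`, and uses an in-memory `FileClaims` class (a made-up name) in place of real file locks.

```python
from graphlib import TopologicalSorter

# Hypothetical task graph: each task maps to the tasks it depends on.
dependencies = {
    "api-layer": set(),
    "frontend-components": set(),
    "integration-tests": {"api-layer", "frontend-components"},
}


def plan_waves(deps: dict) -> list:
    """Group tasks into waves; tasks in the same wave can run in parallel."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


class FileClaims:
    """In-memory stand-in for file locking: one owner per path at a time."""

    def __init__(self) -> None:
        self._owners = {}

    def claim(self, path: str, agent: str) -> bool:
        # setdefault stores the agent only if the path is still unclaimed
        return self._owners.setdefault(path, agent) == agent

    def release(self, path: str, agent: str) -> None:
        if self._owners.get(path) == agent:
            del self._owners[path]


waves = plan_waves(dependencies)
print(waves)
```

Here the API and frontend teammates can start together, while the integration-test teammate waits for both. A second agent calling `claim` on a path that is already owned gets `False` and has to wait or pick other work.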

Tier 3: Swarm Intelligence (15+ Agents)

Enterprise frameworks like claude-flow add consensus protocols (Raft, Byzantine Fault Tolerant), self-learning architectures, and hierarchical queen-worker topologies. The trade-off: dramatic complexity for dramatic capability.

Token cost: Very high, but with optimization (30-50% cost reduction claimed via WASM transforms). Best for: Large-scale enterprise orchestration, autonomous agent swarms, production systems requiring fault tolerance.
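Consensus sounds exotic, but the core rule is a quorum count. The sketch below is neither Raft nor a Byzantine protocol, and it is not taken from claude-flow. It only shows a strict-majority vote plus the standard fault-tolerance bounds those protocol families rely on. Worker names and patch labels are hypothetical.

```python
from collections import Counter
from typing import Optional


def majority_decision(votes: dict) -> Optional[str]:
    """Return the value backed by a strict majority of agents, or None if there is none."""
    if not votes:
        return None
    value, count = Counter(votes.values()).most_common(1)[0]
    return value if count > len(votes) // 2 else None


def max_crash_faults(n: int) -> int:
    """Crash failures a majority-quorum protocol such as Raft tolerates with n nodes."""
    return (n - 1) // 2


def max_byzantine_faults(n: int) -> int:
    """Byzantine failures tolerable with n nodes, from the classic n >= 3f + 1 bound."""
    return (n - 1) // 3


votes = {"worker-1": "patch-A", "worker-2": "patch-A", "worker-3": "patch-B"}
print(majority_decision(votes))
print(max_crash_faults(15), max_byzantine_faults(15))
```

The bounds explain why swarm frameworks want many agents: tolerating even a few misbehaving workers requires a cluster several times larger than the number of faults.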

Where OpenClaw Fits In

OpenClaw’s architecture maps naturally to these tiers through MCP:

As a skill provider: OpenClaw skills become tools available to any Claude Code instance. Your orchestrated agents can use OpenClaw’s integrations (email, calendar, Slack, databases) through MCP without knowing OpenClaw exists.

As an orchestration layer: OpenClaw’s own agent loop can coordinate multiple Claude instances by passing context between skills and models. Its “heartbeat” system keeps agents running persistently.

As a community hub: OpenClaw’s 338+ contributors have built skills for nearly every integration you’d need. Rather than building MCP servers from scratch, you can often find an OpenClaw skill that wraps the service you need.

The practical architecture looks like this:

┌──────────────────────────────────────┐
│           Claude Agent SDK           │
│     (Orchestration + Subagents)      │
├──────────────────────────────────────┤
│        Model Context Protocol        │
│        (Universal Interface)         │
├──────────────┬───────────────────────┤
│ OpenClaw     │ Native MCP Servers    │
│ Skills       │ (Playwright, GitHub,  │
│ (Email,      │ Postgres, Slack...)   │
│ Calendar,    │                       │
│ Custom...)   │                       │
└──────────────┴───────────────────────┘

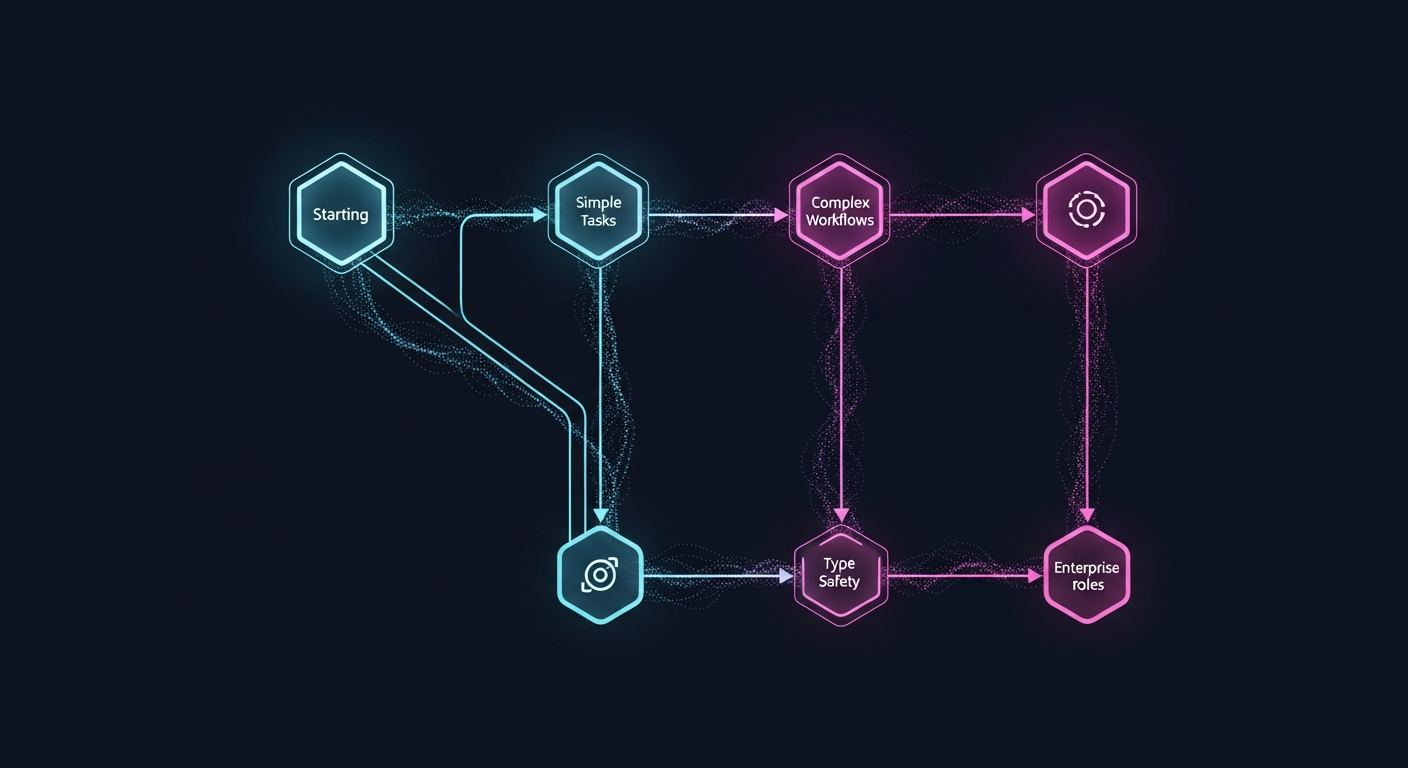

The Best Libraries: A Decision Framework

After analyzing 12+ frameworks, here’s the practical recommendation:

For Most Projects: Claude Agent SDK + MCP

Why: It’s Anthropic’s official solution, powers Claude Code itself, and has native MCP integration. You get subagent parallelization out of the box with the best security posture.

When to pick it: You’re building on Claude, you want production reliability, and you care about token efficiency.

How it works with OpenClaw: Configure OpenClaw MCP servers in the SDK’s mcpServers option (mcp_servers in the Python SDK). Your agents get OpenClaw’s skills as native tools.
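In Python, the same wiring might look like the sketch below. The `@openclaw/mcp-server` package name is taken from the getting-started steps in this article and is not verified here, and `send_email` is a hypothetical tool name. The SDK import is deferred inside `build_options` so the configuration can be inspected without the SDK installed.

```python
def build_mcp_servers() -> dict:
    """Return stdio MCP server definitions keyed by the name agents will see."""
    return {
        # Package name as given in this article; treat it as an assumption.
        "openclaw": {"command": "npx", "args": ["@openclaw/mcp-server"]},
        "playwright": {"command": "npx", "args": ["@playwright/mcp@latest"]},
    }


def mcp_tool_name(server: str, tool: str) -> str:
    """MCP tools are addressed as mcp__<server>__<tool> in allowed_tools."""
    return f"mcp__{server}__{tool}"


def build_options():
    """Create SDK options; imported lazily so this file loads without the SDK."""
    from claude_agent_sdk import ClaudeAgentOptions

    return ClaudeAgentOptions(
        mcp_servers=build_mcp_servers(),
        # Hypothetical tool: grant only what the agent actually needs.
        allowed_tools=[mcp_tool_name("openclaw", "send_email")],
    )


servers = build_mcp_servers()
print(sorted(servers))
print(mcp_tool_name("openclaw", "send_email"))
```

Listing individual tools in `allowed_tools` rather than enabling a whole server keeps the agent's reach narrow, which matters given the security caveats around third-party skills.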

For Complex Workflows: LangGraph

Why: Graph-based orchestration with explicit, inspectable control flow for workflow execution. Supports Claude through the ChatAnthropic class in the langchain-anthropic package. Benchmarked as the fastest framework with the lowest latency.

When to pick it: You need conditional branching, complex state machines, or want to wrap Claude SDK calls in a visual workflow graph.

Hybrid pattern: Each LangGraph node calls the Claude Agent SDK for execution, combining LangGraph’s workflow control with Claude’s native agent capabilities. This pattern is documented by the community.
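The shape of the hybrid pattern can be shown without either library. The sketch below is plain Python, not LangGraph's API: nodes are functions over a state dict, and a router function plays the role of a conditional edge. The `review` and `fix` nodes are hypothetical stubs where a real implementation would call the Claude Agent SDK.

```python
def review(state: dict) -> dict:
    """Stub node: a real version would ask an agent to review the code."""
    issues = ["unresolved TODO"] if "TODO" in state["code"] else []
    return {**state, "issues": issues}


def fix(state: dict) -> dict:
    """Stub node: a real version would ask an agent to repair the flagged code."""
    return {
        **state,
        "code": state["code"].replace("TODO", "DONE"),
        "attempts": state["attempts"] + 1,
    }


def route_after_review(state: dict) -> str:
    """Conditional edge: loop back to fix until clean or out of attempts."""
    if state["issues"] and state["attempts"] < 3:
        return "fix"
    return "END"


NODES = {"review": review, "fix": fix}
ROUTERS = {"review": route_after_review, "fix": lambda state: "review"}


def run_graph(state: dict, start: str = "review") -> dict:
    """Run nodes in sequence, letting each router pick the next node."""
    node = start
    while node != "END":
        state = NODES[node](state)
        node = ROUTERS[node](state)
    return state


final = run_graph({"code": "x = 1  # TODO handle errors", "issues": [], "attempts": 0})
print(final)
```

The attempt cap in the router is the important design choice: a review-fix loop driven by model output needs a hard bound, or a stubborn issue turns into an unbounded token bill.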

For Type-Safe Production: PydanticAI

Why: Built by the Pydantic team (whose validation library underpins FastAPI), achieved production/stable status in February 2026. Brings “that FastAPI feeling” to agent development with strong type validation, structured outputs, and durable execution.

When to pick it: You want static type checking and runtime validation for agent outputs, you’re a FastAPI shop, or you need durable execution that survives failures.
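The idea behind validated agent outputs can be sketched with the standard library alone. The code below is not PydanticAI's API. It is a hand-rolled stand-in showing the kind of check such a framework automates: parse the model's reply, reject anything that does not match the schema, and return a typed object. The `ReviewFinding` schema is hypothetical.

```python
import json
from dataclasses import dataclass

ALLOWED_SEVERITIES = {"low", "medium", "high"}


@dataclass(frozen=True)
class ReviewFinding:
    file: str
    line: int
    severity: str


def parse_finding(raw: str) -> ReviewFinding:
    """Parse a model's JSON reply, raising ValueError if it does not match the schema."""
    data = json.loads(raw)  # json.JSONDecodeError is a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    file, line, severity = data.get("file"), data.get("line"), data.get("severity")
    if not isinstance(file, str):
        raise ValueError("file must be a string")
    # bool is a subclass of int, so exclude it explicitly
    if not isinstance(line, int) or isinstance(line, bool):
        raise ValueError("line must be an integer")
    if not isinstance(severity, str) or severity not in ALLOWED_SEVERITIES:
        raise ValueError("severity must be one of low, medium, high")
    return ReviewFinding(file, line, severity)


finding = parse_finding('{"file": "auth.py", "line": 42, "severity": "high"}')
print(finding)
```

A failed validation is a natural retry signal: the error message can be sent back to the model so it can correct its own output.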

For Enterprise Role-Based Teams: CrewAI

Why: Reportedly used by 60% of the U.S. Fortune 500 and processing 60M+ agent executions monthly. Natural role-based metaphors map well to team structures.

When to pick it: You think in terms of roles and responsibilities, you want established enterprise support, and you’re okay with Python-only.

What to Avoid

- OpenAI Swarm: Deprecated. Replaced by OpenAI Agents SDK. Also OpenAI-only.

- ControlFlow: Archived. Merged into Marvin framework.

- AutoGen: Claude support unclear. Microsoft-centric.

The Security Question Nobody Wants to Discuss

Here’s the uncomfortable truth: OpenClaw’s security posture is not production-ready for orchestration.

CrowdStrike, Cisco, Kaspersky, and Trend Micro independently flagged the same issues:

- Unsandboxed skill execution — malicious skills can achieve remote code execution

- Prompt injection via skills — crafted emails/web pages tricked agents into exfiltrating SSH keys

- 30,000+ exposed instances found by Bitsight researchers

- 404 Media reported an unsecured database allowing anyone to commandeer any agent

This doesn’t mean you can’t use OpenClaw. It means:

- Run OpenClaw in Docker for container-level sandboxing

- Never expose OpenClaw to the public internet without authentication

- Audit every skill before installation

- Use Claude Code’s native sandboxing as the primary execution environment, with OpenClaw as a tool provider only
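Two of these mitigations reduce to checks you can automate. The sketch below assumes a deny-by-default tool allowlist per agent and a loopback-only bind address for the OpenClaw service. The agent names and the `mcp__openclaw__send_email` tool are hypothetical, and neither function is part of OpenClaw or the Claude Agent SDK.

```python
import ipaddress

# Deny by default: each agent gets only the tools it is explicitly granted.
# "mcp__openclaw__send_email" is a hypothetical OpenClaw-backed tool name.
ALLOWLIST = {
    "security-scanner": frozenset({"Read", "Grep", "Glob"}),
    "mailer": frozenset({"mcp__openclaw__send_email"}),
}


def is_tool_allowed(agent: str, tool: str) -> bool:
    """Return True only if the tool is on the agent's allowlist."""
    return tool in ALLOWLIST.get(agent, frozenset())


def is_loopback_only(bind_host: str) -> bool:
    """Return True if a service bound to this host is reachable only locally."""
    if bind_host == "localhost":
        return True  # assumes localhost resolves to a loopback address
    try:
        return ipaddress.ip_address(bind_host).is_loopback
    except ValueError:
        return False  # unrecognized hostnames are treated as exposed


print(is_tool_allowed("security-scanner", "Bash"))
print(is_loopback_only("0.0.0.0"))
```

A check like `is_loopback_only` fits well in a deployment script, where it can refuse to start the stack if the service would listen on every interface.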

Claude Agent SDK’s sandboxed architecture provides a much safer orchestration foundation. Use OpenClaw for its community skills and integrations, but let Claude Code handle the execution and coordination.

Practical Getting-Started Guide

Here’s how to set up a basic orchestration stack:

Step 1: Install Claude Agent SDK

npm install @anthropic-ai/claude-agent-sdk
# or
pip install claude-agent-sdk

Step 2: Set up OpenClaw for skills (Docker)

docker run -d --name openclaw \
  -v openclaw-data:/data \
  -p 3000:3000 \
  ghcr.io/openclaw/openclaw:latest

Step 3: Connect via MCP

import { query } from "@anthropic-ai/claude-agent-sdk";

for await (const message of query({
  prompt: "Research the latest trends and draft a summary email",
  options: {
    mcpServers: {
      openclaw: {
        command: "npx",
        args: ["@openclaw/mcp-server"]
      },
      playwright: {
        command: "npx",
        args: ["@playwright/mcp@latest"]
      }
    }
  }
})) {
  // Only the final result message carries a `result` field
  if ("result" in message) {
    console.log(message.result);
  }
}

Step 4: Enable Agent Teams (optional)

Add to your Claude Code settings:

{
  "env": {
    "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1"
  }
}
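If you already have a settings file, pasting this snippet over it would discard your existing keys. A small merge script avoids that. The sketch below assumes the settings live in a JSON file whose path you pass in. The demo writes to a temporary directory rather than a real settings location, so it is safe to run as-is.

```python
import json
import tempfile
from pathlib import Path


def enable_agent_teams(settings_path: Path) -> dict:
    """Set the experimental flag in a settings file, preserving existing keys."""
    settings = json.loads(settings_path.read_text()) if settings_path.exists() else {}
    settings.setdefault("env", {})["CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS"] = "1"
    settings_path.parent.mkdir(parents=True, exist_ok=True)
    settings_path.write_text(json.dumps(settings, indent=2) + "\n")
    return settings


# Demo against a throwaway file; point the function at your own settings path to use it.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = Path(tmp) / "settings.json"
    demo_path.write_text('{"env": {"EXISTING": "kept"}}')
    merged = enable_agent_teams(demo_path)

print(merged)
```

Because the flag is experimental, keeping the change in a script also makes it easy to reverse later by deleting one key.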

The Expert’s Rule of Thumb

Addy Osmani, who leads engineering at Google Chrome, offers the clearest heuristic for agent orchestration:

“80% planning and review, 20% execution.”

And Anthropic’s own engineering team confirms: the value isn’t in running more agents—it’s in decomposing problems into structures that agents can execute. Start small. Prove the pattern with 2-3 subagents on code review. Then scale to teams for complex features. Only reach for swarm intelligence when you’ve outgrown simpler patterns.

The future of AI agent orchestration isn’t picking the “best” framework. It’s composing MCP-compatible tools through thin orchestration layers. OpenClaw provides the skills. Claude Agent SDK provides the brains. MCP provides the glue. The library you choose is just the conductor’s baton—what matters is the score you write.

Sources

- Anthropic: How we built our multi-agent research system

- Anthropic: Building agents with the Claude Agent SDK

- Claude Code Agent Teams Documentation

- OpenClaw GitHub Repository

- OpenClaw Official Documentation

- Addy Osmani: Claude Code Agent Teams

- CrowdStrike: What Security Teams Need to Know About OpenClaw

- LangGraph + Claude SDK Integration

- claude-flow: Agent Orchestration Platform

- PydanticAI Documentation

- CrewAI: Multi-Agent Platform

- Model Context Protocol

- Claude Code Swarm Orchestration Skill

- Claude Code’s Hidden Multi-Agent System