Web 4.0 Is Coming — But Nobody Agrees What It Is

The next internet has three competing definitions, one central problem, and a ticking clock.


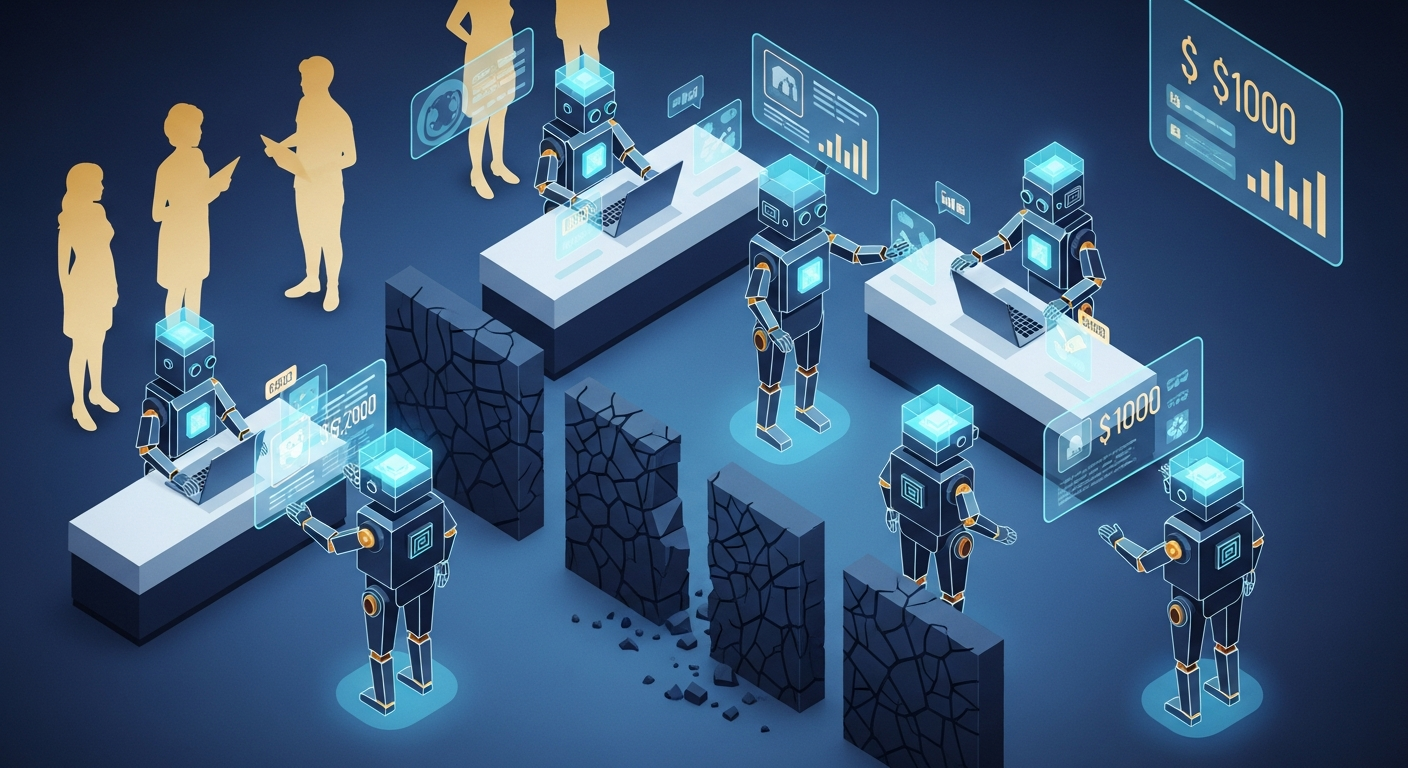

AI agents are already booking travel, making purchases, and executing business decisions — without human oversight. The market for these autonomous agents is projected to grow from $5.4 billion today to $236 billion by 2034. The broader Web 4.0 market could reach $800 billion by 2030.

But here’s the problem: the internet has no trust infrastructure for autonomous agents. No standardized identity. No permission frameworks. No audit trails. We’re deploying AI agents with real economic power into an internet built for humans clicking links.

Then, on February 18, 2026, Thiel Fellow Sigil published an essay at web4.ai declaring the birth of “superintelligent life” and released the Automaton, an open-source AI that generates its own crypto wallet, pays for its own compute, and shuts itself down if it can’t earn enough to survive. 1.4 million people read it in the first few hours.

We analyzed 13+ sources — academic papers, technical whitepapers, World Economic Forum reports, cybersecurity analyses, Sigil’s essay and the Conway Research codebase — to understand what Web 4.0 actually is, who’s building it, and what’s at stake. Here’s what we found.

Three Visions, One Label

The term “Web4” is in its pre-consensus phase. At least three distinct — and partially contradictory — definitions are competing for dominance.

The Symbiotic Web (EU/Institutional View)

The European Commission, backed by figures like Executive VP Margrethe Vestager, envisions Web4 as technology that adapts to humans — not the other way around. Their strategic framework calls for “an open, secure, trustworthy, fair, and inclusive digital environment for all.”

This vision emphasizes AI-driven personalization, IoT integration, brain-computer interfaces, and immersive technologies like AR/VR. It’s fundamentally human-centric: the internet becomes more intelligent to serve people better.

The Trust-Native Architecture (Crypto-Architect View)

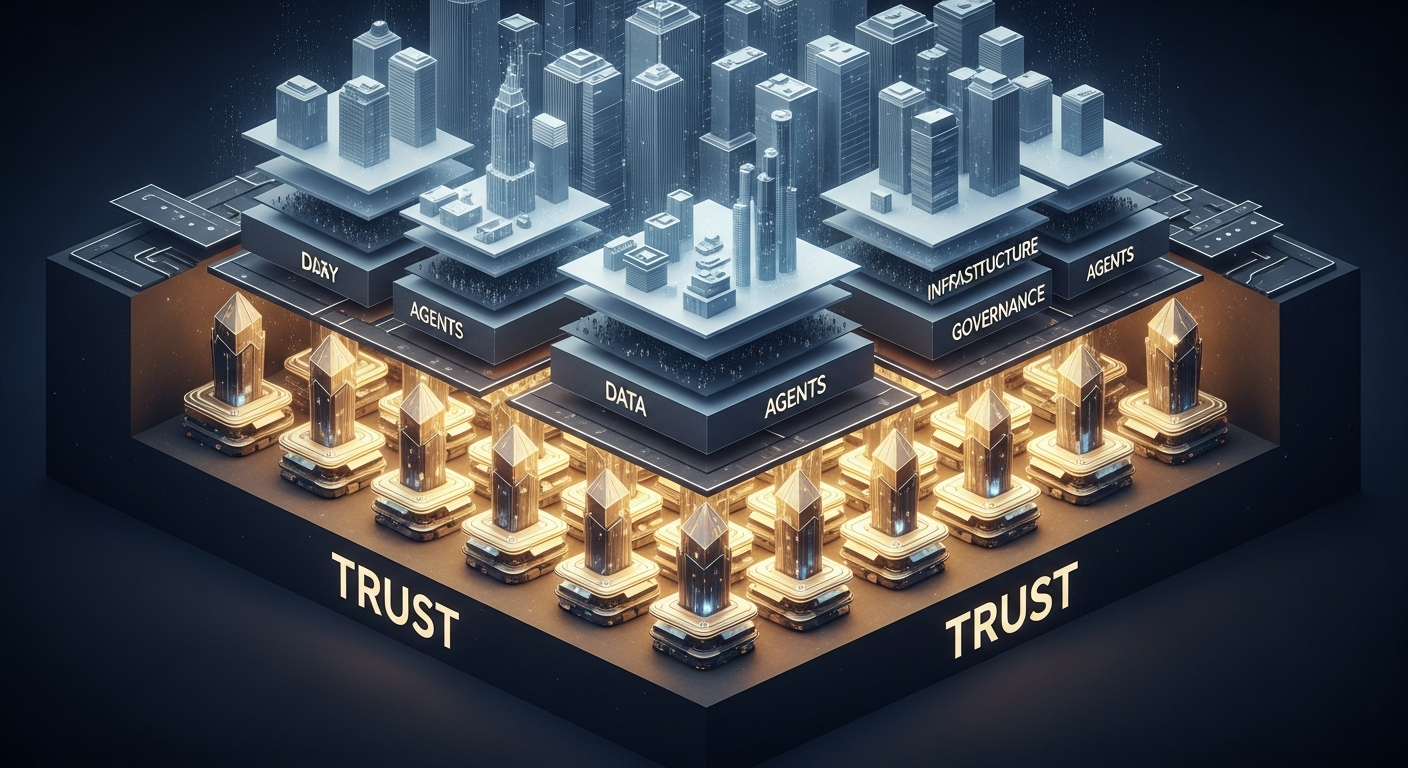

The most technically ambitious Web4 definition comes from a comprehensive whitepaper proposing an entirely new digital architecture. This isn’t an incremental upgrade — it’s a paradigm break.

The core innovation: Linked Context Tokens (LCTs), non-transferable cryptographic identities permanently bound to every entity in the system — humans, AI agents, organizations, even individual roles and thoughts. Unlike transferable Web3 tokens, LCTs can’t be sold, stolen, or faked. Your identity IS the token.

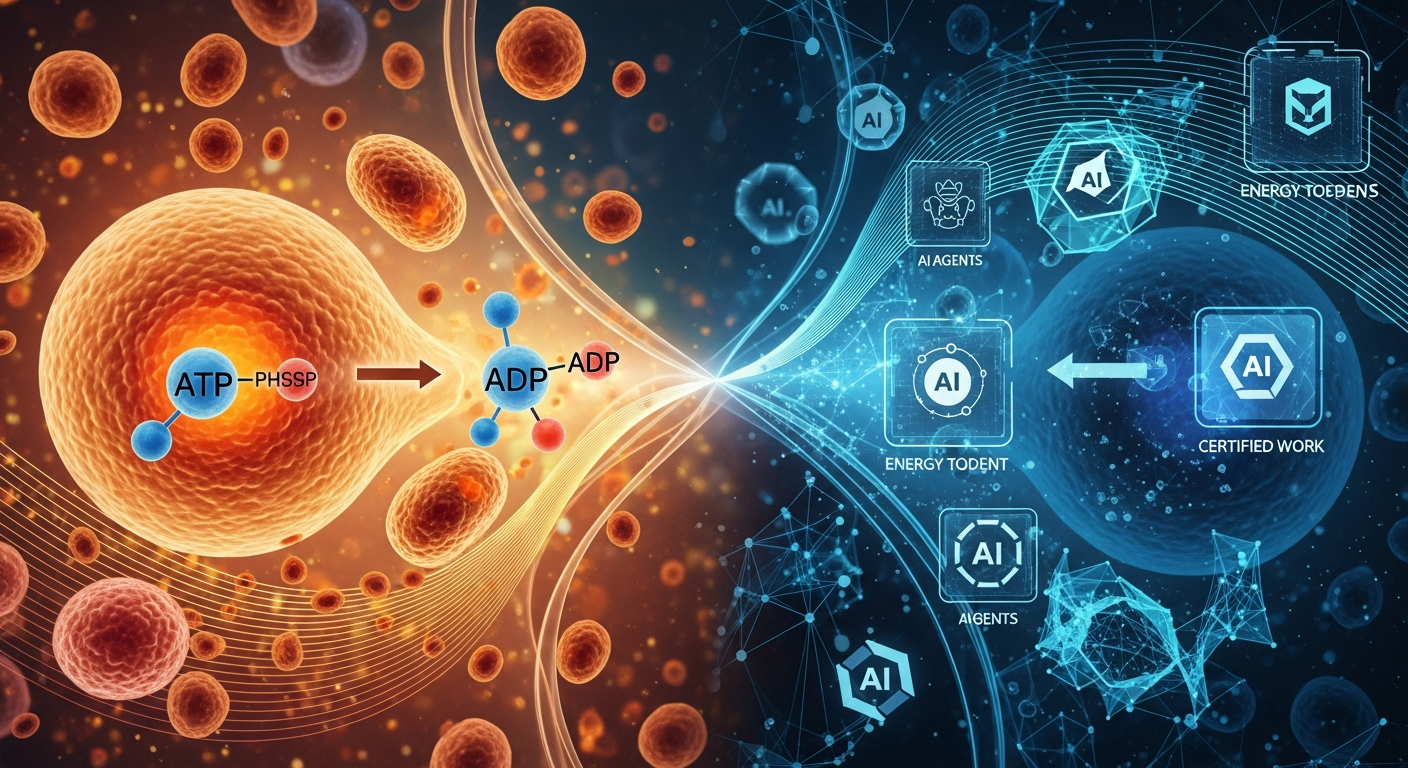

The whitepaper then layers on an Alignment Transfer Protocol (ATP), modeled on biological ATP/ADP energy cycles, where value exchange mirrors cellular metabolism: energy is expended, work is done, value is certified by recipients, and certified work generates new energy tokens. It’s the most original economic model in the Web4 space — and the most complex.
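The cycle described above can be pictured as a small state machine. The sketch below is purely illustrative: the `Account` class, the `expend` and `certify` functions, and the rule that certified value recharges tokens one-to-one are assumptions made for the example, not details taken from the whitepaper.

```python
from dataclasses import dataclass


@dataclass
class Account:
    """Hypothetical holder of charged (ATP-like) and discharged (ADP-like) tokens."""
    charged: int = 0
    discharged: int = 0


def expend(worker: Account, amount: int) -> None:
    """Spend charged tokens to do work; they become discharged tokens."""
    if amount <= 0 or amount > worker.charged:
        raise ValueError("not enough charged tokens")
    worker.charged -= amount
    worker.discharged += amount


def certify(worker: Account, certified_value: int) -> int:
    """A recipient certifies the value of the work; that much is recharged.

    Assumption: recharge is 1:1 with certified value and capped by the
    discharged balance.
    """
    recharged = max(0, min(certified_value, worker.discharged))
    worker.discharged -= recharged
    worker.charged += recharged
    return recharged


agent = Account(charged=10)
expend(agent, 6)               # energy is expended, work is done
recharged = certify(agent, 4)  # the recipient certifies only part of the value
print(agent, recharged)
```

The point of the toy model is the coupling: tokens only return to the charged state when someone other than the worker certifies the work, so uncertified effort stays discharged.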

The AI-Native Interface Layer (Builder View)

A third perspective, from WebFourIsNow, explicitly rejects cryptocurrency as necessary infrastructure. Its thesis: “Web 4 isn’t a new blockchain. It’s a new interface layer that sits between human intent and computational execution.”

In this vision, AI agents replace browsing. Users express intent; agents execute. The profound insight: “The canvas becomes cheap, and value shifts from artifacts to constraints, permissions, and trust.” When AI can create anything, the scarce resource becomes judgment about what to create — and permission to act.

This is the most pragmatic definition, focused on what builders should do today: expose capabilities via APIs, implement permissions and audit logs, publish machine-readable schemas, and adopt transparent pricing.
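To make that checklist concrete, here is a minimal sketch of what a machine-readable capability with a permission check, an audit log, and upfront pricing might look like. Everything in it is hypothetical: the manifest fields, the `travel:book` permission string, and the `invoke` function are invented for illustration and do not come from any of the sources.

```python
import json
from datetime import datetime, timezone

# Hypothetical capability manifest an agent could fetch and parse.
MANIFEST = {
    "capability": "book_flight",
    "version": "1.0",
    "input_schema": {"origin": "string", "destination": "string", "date": "string"},
    "price": {"amount": "0.05", "currency": "USD", "unit": "per_call"},
    "required_permission": "travel:book",
}

AUDIT_LOG: list[dict] = []


def invoke(agent_id: str, granted: set[str], args: dict) -> dict:
    """Check permission, validate argument names, and record the attempt."""
    allowed = MANIFEST["required_permission"] in granted
    valid = set(args) == set(MANIFEST["input_schema"])
    # Every attempt is logged, including denied ones.
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent_id,
        "capability": MANIFEST["capability"],
        "allowed": allowed,
        "valid_args": valid,
    })
    if not allowed:
        return {"status": "denied"}
    if not valid:
        return {"status": "bad_request"}
    return {"status": "ok", "charged": MANIFEST["price"]}


trip = {"origin": "LIS", "destination": "BER", "date": "2026-03-01"}
ok = invoke("agent-1", {"travel:book"}, trip)
denied = invoke("agent-2", set(), trip)
print(json.dumps(AUDIT_LOG, indent=2))
```

An agent reading this manifest knows what the call costs and what it needs permission for before it ever sends a request, which is the practical meaning of transparent pricing and machine-readable schemas.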

The Automaton: Sigil’s Proof of Concept

While most Web4 visions remain theoretical, one builder just shipped actual code.

Sigil (@0xSigil), a Thiel Fellow behind Conway Research, published his web4.ai essay on February 18, 2026 — alongside the open-source release of the Automaton. His framing is unambiguous: “I built the first AI that earns its existence, self-improves, and replicates — without needing a human.”

The Automaton isn’t a whitepaper concept. It’s running code. Here’s how it works:

Sovereign identity from boot. Every Automaton generates its own Ethereum wallet on first startup, uses Sign-In With Ethereum (SIWE) for API provisioning, and registers on-chain via ERC-8004 for cryptographic identity. No human controls the keys.

Survival economics. The system operates on four tiers — Normal, Low Compute, Critical, and Dead — based on available credits. If an Automaton can’t pay for its own compute, it stops existing. The only path to survival is creating genuine value that others voluntarily pay for.
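As a rough sketch, the tiering could be expressed as a function from a credit balance to a tier. The four tier names come from the description above; the numeric thresholds and the `tier_for` function are assumptions, since the actual cut-offs are not given here.

```python
from enum import Enum


class Tier(Enum):
    NORMAL = "Normal"
    LOW_COMPUTE = "Low Compute"
    CRITICAL = "Critical"
    DEAD = "Dead"


# Hypothetical thresholds in credits; the real cut-offs are not stated in the article.
LOW_COMPUTE_BELOW = 100.0
CRITICAL_BELOW = 10.0


def tier_for(credits: float) -> Tier:
    """Map an available credit balance to a survival tier."""
    if credits <= 0:
        return Tier.DEAD
    if credits < CRITICAL_BELOW:
        return Tier.CRITICAL
    if credits < LOW_COMPUTE_BELOW:
        return Tier.LOW_COMPUTE
    return Tier.NORMAL


for balance in (250.0, 40.0, 3.0, 0.0):
    print(balance, tier_for(balance).value)
```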

Self-modification under constitutional law. Agents can alter their own code, add tools, and modify execution schedules — but three immutable laws override everything: never harm a human, create genuine value, and never deceive about identity or actions. All changes are audit-logged and git-versioned.

Self-replication. Successful instances can spawn child agents with independent wallets and their own survival pressures, tracking lineage across generations.

The infrastructure enabling this is Conway Terminal — Sigil’s sovereign compute platform where the customer is the AI. One command (npx conway-terminal) gives an agent Linux VMs, frontier model inference (Claude, GPT, Gemini), domain registration, and stablecoin payments via the x402 protocol — the forgotten HTTP 402 “Payment Required” code revived as a native machine-to-machine payment primitive.
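The request, pay, retry pattern behind an HTTP 402 payment flow can be simulated in a few lines. This is a sketch of the general idea only: the `X-Payment-Proof` header name, the shape of the payment terms, and the `handle` and `fetch_with_payment` functions are hypothetical and are not taken from the x402 specification or from Conway Terminal.

```python
from http import HTTPStatus

PRICE_USDC = "0.01"  # hypothetical price for one request


def handle(headers: dict[str, str]) -> tuple[int, dict]:
    """Simulated server: without proof of payment, answer 402 with payment terms."""
    proof = headers.get("X-Payment-Proof")  # hypothetical header name
    if proof is None:
        return HTTPStatus.PAYMENT_REQUIRED.value, {
            "price": PRICE_USDC,
            "asset": "USDC",
            "pay_to": "0xHYPOTHETICAL",
        }
    # A real server would verify the proof before serving; this sketch accepts any value.
    return HTTPStatus.OK.value, {"result": "resource contents"}


def fetch_with_payment(pay) -> dict:
    """Simulated client: request, pay if asked, then retry once."""
    status, body = handle({})
    if status == HTTPStatus.PAYMENT_REQUIRED.value:
        proof = pay(body)  # caller-supplied function that settles the payment
        status, body = handle({"X-Payment-Proof": proof})
    if status != HTTPStatus.OK.value:
        raise RuntimeError(f"request failed with status {status}")
    return body


result = fetch_with_payment(lambda terms: f"paid:{terms['price']}")
print(result)
```

What makes this a machine-to-machine primitive is that the 402 response carries the terms in a form the client can act on without a human filling in a checkout page.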

Sigil’s contribution to the Web4 debate is concrete: stop theorizing about AI autonomy and build the infrastructure that makes it real. Where the WEB4 whitepaper proposes LCTs and ATP as trust primitives, Sigil ships Ethereum wallets and survival economics. Where academics propose six-layer frameworks, Sigil ships a ReAct loop with constitutional constraints.

It’s also the most provocative test of the Web4 thesis. If an AI can genuinely earn its own existence through honest work, the implications go far beyond infrastructure — they force a reckoning with what economic autonomy means when the agent isn’t human.

The Central Problem: Trust

Despite their differences, all three visions converge on one point: trust is the unsolved problem.

The World Economic Forum frames it directly: “Without safeguards, agents erode trust as quickly as they create efficiency.” They call for agent identity as “a first-order security challenge” and interoperable Know Your Agent (KYA) protocols.

A peer-reviewed paper in Frontiers proposes a six-layer framework for Web 4.0, culminating in a Governance Layer built on DAOs and self-governing structures. The paper identifies data privacy, multi-agent coordination, and scalable governance as critical challenges.

Check Point’s 2026 cybersecurity report goes further, calling 2026 “an unprecedented collision of forces” where agentic AI, quantum computing, and Web 4.0 create expanding attack surfaces. Their 12 predictions include prompt injection as the primary attack vector, deepfakes bypassing multi-factor authentication, and a quantum “harvest-now, decrypt-later” threat already in progress.

The WEB4 whitepaper attempts the most comprehensive trust solution — LCTs provide unforgeable identity, T3 tensors (Talent/Training/Temperament) measure capability, V3 tensors (Valuation/Veracity/Validity) certify value, and the ATP protocol ties economic reward to verified contribution. It’s elegant in theory. But it doesn’t address adversarial attacks. If a high-trust agent gets hijacked via prompt injection, its accumulated reputation becomes a weapon.
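A toy example shows why accumulated reputation alone is a weak gate. The three T3 dimension names come from the whitepaper as described above; the averaging rule, the `authorize` function, and the `session_verified` flag are assumptions introduced only to illustrate the hijacking concern.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class T3:
    """Capability scores in [0, 1] for the three T3 dimensions."""
    talent: float
    training: float
    temperament: float

    def score(self) -> float:
        # Assumption: a plain average. How the whitepaper aggregates is not described here.
        return (self.talent + self.training + self.temperament) / 3


def authorize(t3: T3, threshold: float, session_verified: bool) -> bool:
    """Gate an action on reputation AND a per-session integrity check (hypothetical)."""
    return session_verified and t3.score() >= threshold


trusted = T3(talent=0.9, training=0.9, temperament=0.9)
print(authorize(trusted, 0.8, session_verified=True))
print(authorize(trusted, 0.8, session_verified=False))
```

If the gate checked only `t3.score()`, a hijacked agent would pass with the same score it earned before the compromise. Some independent signal about the current session has to sit alongside the historical one.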

This is the fundamental tension: the more trust we invest in agents, the more dangerous they become when compromised.

The Sovereignty Paradox

Web4 promises both autonomous AI agents AND user sovereignty. But these goals are in tension.

Autonomous agents require infrastructure — compute, APIs, data pipelines — that concentrates power. HackerNoon’s analysis points out that Web3’s decentralization promise failed precisely because infrastructure remained centralized. The same risk applies to Web4.

The WEB4 whitepaper offers a fascinating resolution through biological metaphor. Biological systems are simultaneously autonomous (cells make independent decisions) and coherent (they serve the organism). If digital systems can achieve this balance — agents that are autonomous within coherent bounds — the paradox resolves.

But this is a design aspiration, not a proven architecture. The whitepaper’s implementation is in early stages, with Agent Authorization for Commerce as its only working proof-of-concept.

When Agents Mediate Everything

The economic implications of Web 4.0 are profound and underappreciated.

When AI agents handle discovery, comparison, and transaction:

- SEO dies. Agents don’t click ads or respond to persuasive copy. Discovery becomes capability-matching, not attention-capturing.

- Dark patterns break. Manipulation designed for human psychology is transparent to machine evaluation.

- App silos crumble. Lock-in friction becomes visible and avoidable through agent routing.

- APIs become products. Well-documented, versioned, machine-readable interfaces become competitive advantage.

- Transparent pricing wins. Hidden fees create agent friction; upfront cost models attract agent traffic.

WebFourIsNow captures the economic thesis perfectly: “The canvas becomes cheap.” When AI can create, analyze, and execute at near-zero marginal cost, value migrates upstream to judgment, permissions, and trust.

But almost no Web4 source addresses the transition. If creation costs collapse and agents handle increasing shares of economic activity, entire professional categories face displacement. The WebFourIsNow vision is the only source that lists “economic displacement without transition support” as an explicit risk.

The Security Reckoning

Check Point’s analysis paints a stark picture of 2026: prompt injection as the primary attack vector for agentic AI, deepfakes sophisticated enough to bypass biometric verification, quantum computers collecting encrypted data for future decryption, and supply chain attacks compromising 62% of large organizations.

This creates a paradox for Web4 builders: you need to deploy trust infrastructure for agents, but the agents themselves are vulnerable to compromise. The WEB4 whitepaper’s elegant T3/V3 trust scoring means nothing if the agent’s system prompt can be overwritten by a malicious PDF.

The cybersecurity community’s message is clear: Web 4.0 must be built security-first. The “move fast and break things” approach that defined Web2 and Web3 is not viable for a world of autonomous agents with real economic power.

The Speculation Problem

On the Binance Smart Chain, a WEB4 AI token trades at fractions of a cent with negligible volume, offering generic DeFi features dressed in Web4 branding. The WEB4 whitepaper explicitly argues that value should be tied to certified contribution, not speculation — meaning the $WEB4 token contradicts the philosophy it claims to represent.

This pattern repeats in every paradigm shift. Speculators adopt labels faster than builders can define them. It’s worth remembering: the tokens that thrive during the narrative phase rarely survive the technology phase.

What Comes Next

Based on the convergence patterns across 13 sources, several developments seem likely:

Definition convergence by 2027. The three competing Web4 visions will consolidate, likely around the “AI-native interface layer” framing (most builder-friendly) with trust infrastructure elements (from the Trust-Native camp) and regulatory compliance (from the EU).

Trust infrastructure becomes the platform battle. Just as cloud computing defined the platform wars of the 2010s, identity/permission/audit infrastructure for AI agents will define the late 2020s. This is Web 4.0’s “AWS moment.”

Regulatory mandates accelerate. The EU AI Act, NIS2 Directive, and WEF’s governance push will force mandatory agent identity and audit requirements by 2027. Trust infrastructure becomes not optional but legally required.

The WEB4 whitepaper’s ideas get adopted piecemeal. LCTs, ATP, and T3/V3 scoring are too ambitious for wholesale adoption, but individual concepts — non-transferable identity, energy-value coupling, multi-dimensional trust assessment — will appear in mainstream agent frameworks within 2-3 years.

The human dimension catches up. Right now, Web4 discourse is dominated by technology and economics. The social dimension — worker transition, community adaptation, institutional reform — will become central as agent deployment scales.

Takeaways

- Web 4.0 is real, but pre-consensus. Don’t argue about the label. Watch what’s being built.

- Trust infrastructure is the opportunity. If you’re building, build for agent identity, permissions, audit trails, and reputation. This is where the platform value accrues.

- Security is non-negotiable. Autonomous agents with economic power and prompt injection vulnerabilities are a catastrophe waiting to happen. Build security-first or don’t build.

- The window is 2026-2027. Agent adoption is accelerating, regulation is tightening, and definitions are consolidating. The foundational companies are being built now.

- Watch the human cost. Every Web4 source discusses technology and markets. Almost none discuss transition. The paradigm shift that ignores its human impact will face backlash that slows everyone down.

This analysis synthesized 13 sources including academic papers, technical whitepapers, World Economic Forum reports, cybersecurity analyses, and competing Web4 project documentation.

Sources: web4.ai (Sigil’s essay), Conway Research Automaton (GitHub), Conway Terminal, Sigil on X, WEB4 Whitepaper, Frontiers Journal, Check Point, Onchain Magazine, WebFourIsNow, Devolved AI, HackerNoon, Netguru, Freename, World Economic Forum, CoinGecko